Third-Party Risk Management: The Complete 2026 Guide

By Visualping Editorial Team

Updated April 21, 2026

Third-party risk management is how a company governs the risk that comes from the vendors and processors it buys from, and from the fourth parties those providers depend on. The work sits between procurement, compliance, security, and the business owners who actually use each vendor. A well-run program surfaces the issues that cause incidents before they become incidents. A poor one produces a file of annual questionnaires nobody reads.

This guide is written for practitioners running or rebuilding a TPRM program in 2026. It covers the definition and ownership model, the regulatory backdrop, the lifecycle, the four major frameworks, the policy, the three-layer stack most programs actually need, the operating model, and the metrics that hold up in a board meeting. The spokes linked throughout go deeper on onboarding, due diligence, risk assessment, software selection, and continuous monitoring.

What third-party risk management is

Third-party risk management, or TPRM, is the program of policies, processes, and tooling an organization uses to identify, assess, monitor, and respond to the risks created by the external parties it relies on. "Third party" covers more than "vendor". It includes software providers, cloud services, data processors, outsourced operations, staffing agencies, consultants, service integrators, and any other entity with access to systems, data, customers, or brand. Fourth parties, the subcontractors your vendors rely on, are in scope.

The risks fall into consistent categories. Cybersecurity. Data privacy. Operational resilience. Financial stability. Regulatory and legal exposure. Reputational risk. Concentration risk when one provider sits behind too much of the business. Geopolitical risk when a supplier is exposed to sanctions or jurisdictional shifts. A full program handles all of these, not only the cyber slice.

Ownership is usually shared. Procurement runs intake and contract lifecycle. Security runs technical assessments. Compliance owns the regulatory view. Legal handles DPAs and indemnification. The business owner inside the buying team is accountable for the relationship. A central TPRM function, reporting into GRC or the CISO, orchestrates the whole thing and maintains the vendor inventory, the risk register, and the reporting stack. Without a named owner, TPRM becomes a questionnaire factory with no escalation path. When ownership is well-defined, each group knows what it owns and what it hands off. The spoke on vendor risk assessment walks through the RACI most programs end up with.

Why TPRM matters in 2026

The regulatory pressure is heavier than it has been in years, and the attack surface keeps expanding.

DORA, the EU Digital Operational Resilience Act, has been enforceable since January 2025. It requires in-scope financial entities to maintain a register of all ICT third-party service providers, classify contracts by criticality, run regular resilience testing, and give regulators direct inspection rights over critical providers. The registers alone have forced banks to reconcile contract data that had sat fragmented across procurement systems for years.

In the United States, the SEC cyber disclosure rule now requires public companies to disclose material cyber incidents within four business days of determining that an incident is material, and to describe cyber risk management, including third-party dependencies, in their annual 10-K. The OCC, FDIC, and Federal Reserve issued Interagency Guidance on Third-Party Relationships in June 2023 that consolidates federal bank regulator expectations. State privacy laws (CCPA, CPRA, VCDPA, CPA, and their peers) carry flow-down obligations into every processor relationship.

Meanwhile, vendor change has accelerated far past annual-review cadence. A program running on annual questionnaires is reading a vendor snapshot from a year ago while that vendor has restructured a data flow, added a new processor, and updated its breach-notification clause. Across 1,132 customer-configured sub-processor monitors, 67 percent recorded a change over a 90-day window. Privacy policies moved at 41 percent over the same window. Trust centers moved at 62 percent. That is the gap TPRM is now expected to close.
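To put the annual-review gap in rough numbers, the sketch below extrapolates the 90-day sub-processor figure to a full year. It assumes each 90-day window is independent and that the 67 percent rate holds in every window. Both are simplifying assumptions rather than measurements, so treat the result as an order-of-magnitude illustration only.

```python
# Back-of-envelope: chance a sub-processor page is unchanged for a full year.
# ASSUMPTION: each 90-day window is independent and the 67% rate is constant.
CHANGE_RATE_90D = 0.67          # figure quoted above for sub-processor monitors
WINDOWS_PER_YEAR = 365 / 90     # roughly four windows between annual reviews

p_unchanged_window = 1 - CHANGE_RATE_90D
p_unchanged_year = p_unchanged_window ** WINDOWS_PER_YEAR

print(f"Unchanged over one 90-day window: {p_unchanged_window:.0%}")
print(f"Unchanged over a full year (under the assumptions): {p_unchanged_year:.1%}")
```

Under those assumptions, on the order of one page in a hundred would still look the same at the next annual review.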

Incident frequency adds to the pressure. The breach-came-through-a-vendor pattern is now the default incident story. Ransomware groups have moved up-stack, hitting managed service providers and software supply chains to reach many customers through one intrusion. Programs that can answer the board's questions about vendor exposure with data move up the board priority list.

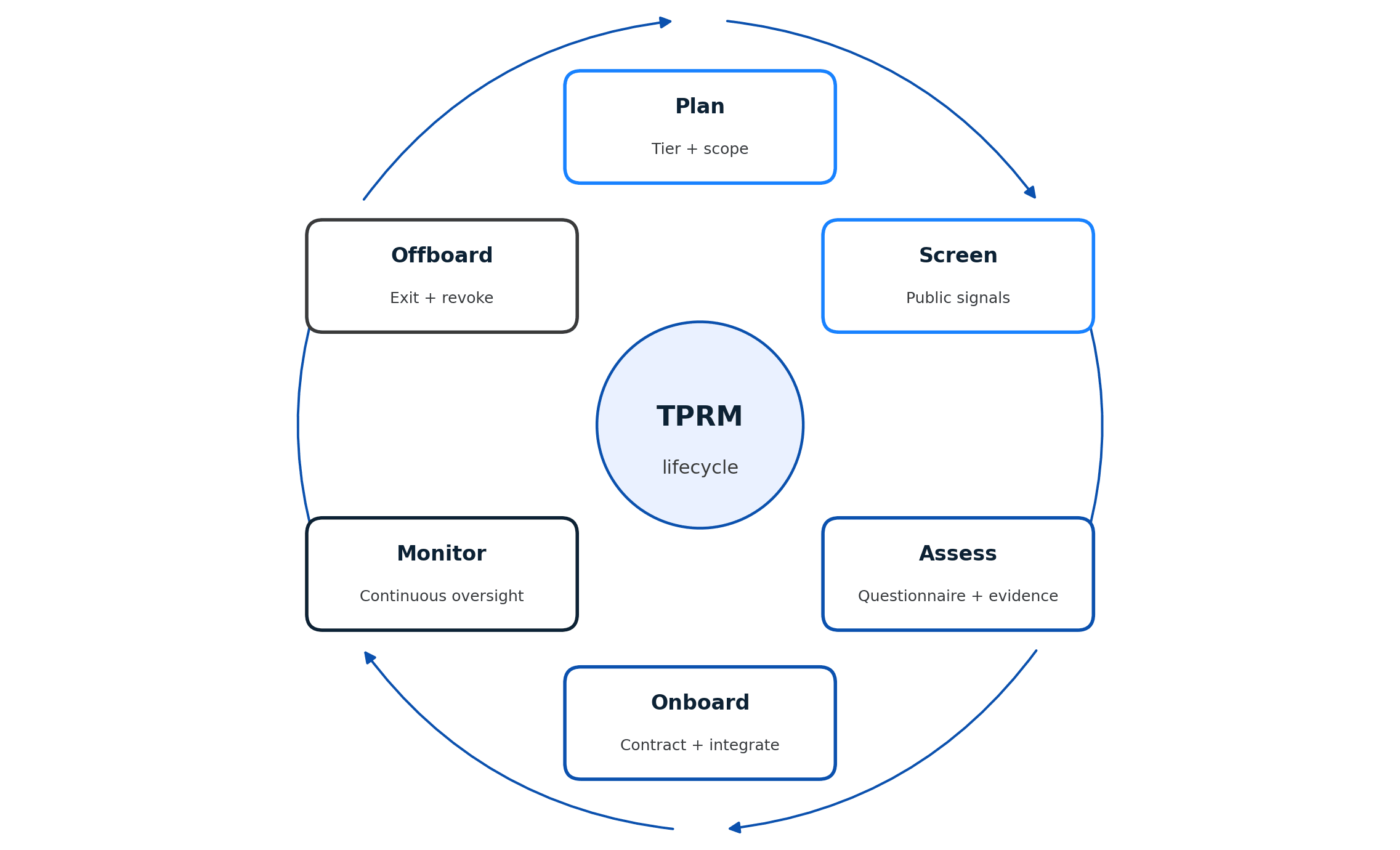

The TPRM lifecycle: six stages

The lifecycle is the backbone of the program. Different frameworks use different labels, but the flow is consistent: plan, screen, assess, onboard, monitor, offboard.

Plan. Before a vendor search begins, the business owner and procurement define what the relationship needs to do, what data or access is involved, and what tier of criticality it represents. Tiering at intake saves work later. A tier-1 vendor handling regulated data goes through full due diligence. A tier-4 marketing tool with no access to customer systems gets a lighter path. Without tiering, every vendor is treated the same and the program stalls.

Screen. Initial risk screening uses public signals: cyber ratings, sanctions and watchlist checks, adverse media, financial solvency indicators, prior breach history. Screening filters out vendors that should not reach a full assessment. The sub-processor selection piece covers how to screen upstream dependencies at the same time, because a vendor's sub-processor list often introduces risk its own questionnaires do not surface. Concentration and geographic exposure are flagged here too.

Assess. Full due diligence for everything that passes screening. This is where questionnaires (SIG, CAIQ, or custom), evidence review (SOC 2, ISO 27001, pen test summaries, insurance certificates, DPAs), and technical assessments sit. The vendor due diligence checklist covers the document set and how to prioritize when a vendor is dragging. The output is a documented risk decision: approve, approve with conditions, reject, or defer.

Onboard. Contracting, access provisioning, security integration (SSO, logging, key management), business owner acknowledgement, and placement into the ongoing monitoring plan. The vendor onboarding spoke details the handoff, including the fields a TPRM system needs from procurement to populate its records.

Monitor. Continuous oversight of the vendor post-contract. This is the stage most programs under-instrument. Monitoring includes cyber-rating deltas, sub-processor changes, trust-center updates, privacy and terms-of-service revisions, certificate expirations, financial health signals, and regulatory action filings. The continuous vendor monitoring spoke covers the signal architecture and alert routing in detail.

Offboard. Contract termination, data return or destruction, access revocation, key rotation, and a documented exit assessment. Vendors that leave quietly leak the longest. An offboarding checklist with owner sign-off is one of the simplest controls in the lifecycle.

The stages loop. Monitoring triggers re-assessment. Re-assessment sometimes triggers a tier change, which changes the monitoring plan. A well-run program is never "done" with a vendor until the vendor is out.
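The loop described above can be sketched as a small state machine. This is a minimal illustration, not a reference model: the stage names come from this guide, but the exact set of allowed transitions is an assumption, and rejections at screening or assessment are left out for brevity.

```python
from enum import Enum


class Stage(Enum):
    PLAN = "plan"
    SCREEN = "screen"
    ASSESS = "assess"
    ONBOARD = "onboard"
    MONITOR = "monitor"
    OFFBOARD = "offboard"


# Allowed moves between stages. The MONITOR -> ASSESS edge is the loop:
# a monitoring signal can send a vendor back for re-assessment.
# ASSUMPTION: this edge set is illustrative; your policy defines the real one.
TRANSITIONS = {
    Stage.PLAN: {Stage.SCREEN},
    Stage.SCREEN: {Stage.ASSESS},
    Stage.ASSESS: {Stage.ONBOARD, Stage.MONITOR, Stage.OFFBOARD},
    Stage.ONBOARD: {Stage.MONITOR},
    Stage.MONITOR: {Stage.ASSESS, Stage.OFFBOARD},
    Stage.OFFBOARD: set(),  # terminal: the vendor is out
}


def advance(current: Stage, target: Stage) -> Stage:
    """Return the target stage if the move is allowed, else raise ValueError."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    return target


stage = Stage.MONITOR
stage = advance(stage, Stage.ASSESS)   # monitoring signal triggers re-assessment
stage = advance(stage, Stage.MONITOR)  # re-assessment done, back to monitoring
print(stage.value)
```

Encoding the transitions as data rather than scattered conditionals makes it easier to audit which moves the program permits.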

Frameworks: NIST, FFIEC, OCC, and ISO

Four framework families cover most of what TPRM programs reference. Mature programs use two or three in combination.

NIST SP 800-161 Rev. 1 (Cybersecurity Supply Chain Risk Management Practices for Systems and Organizations). The federal reference for supply-chain risk management. It defines C-SCRM practices, integrates with NIST SP 800-53 controls, and is the most common backbone for US federal contractors and regulated technology vendors. It pairs well with NIST CSF 2.0, which added a Govern function that formalizes supply-chain oversight at the executive level.

FFIEC IT Examination Handbook. The banking reference. The Outsourcing Technology Services booklet and the Business Continuity Management booklet set the federal examination expectations for bank third-party relationships. The FFIEC material is dense and examiner-facing, but it remains the most detailed operational guide for program maturity.

OCC / FDIC / Federal Reserve Interagency Guidance (June 2023). This consolidated prior OCC, FDIC, and FRB guidance into a single document covering the third-party risk lifecycle for federally regulated banks. It is not framework-heavy, but it is authoritative. Anything a bank program does should be traceable back to its sections on planning, due diligence, contracting, ongoing monitoring, and termination.

ISO 27036 (Information security for supplier relationships). The international standard, organized into four parts (overview and concepts, requirements, supply chain security guidance for hardware, software, and services, and cloud services guidance). Programs certified to ISO 27001 use it to structure supplier controls, and customer contracts often require them to. Outside financial services and US federal, ISO 27036 is the most common benchmark.

Industry-specific references matter too. The Shared Assessments SIG and CSA CAIQ are questionnaire standards, not frameworks. HITRUST CSF is common in healthcare. CMMC governs the US defense supply chain. DORA in the EU is regulation, not framework, but it operationalizes supervisory expectations in a way that reads like a framework.

Programs rarely pick one. The pattern is a primary control framework (NIST 800-53 or ISO 27001) plus a sector-specific regulatory reference (FFIEC, OCC Interagency, DORA, or HIPAA) plus questionnaire standards (SIG or CAIQ) plus the internal policy that knits them together. The vendor risk management software guide covers how platforms map to these frameworks in practice.

TPRM policy essentials

A TPRM policy is the internal document that codifies the program. It is the thing auditors read first and the thing that gets cited in every escalation. A useful policy covers seven sections.

Scope. Which relationships, data classifications, and business units the policy covers. Scope should be explicit about what is in and what is out, because ambiguity here is what creates orphaned vendors.

Definitions. Third party, fourth party, critical vendor, regulated data, concentration risk, and the tiering scheme itself. Sharp definitions prevent argument at tier-determination time.

Roles and responsibilities. A RACI by lifecycle stage. Who approves, who reviews, who executes, who gets informed. Name the function, not the person.

Risk tiering. Tier criteria, tier definitions, and the matrix that maps data access, criticality, and regulatory exposure to a tier. Tiering drives every downstream workflow: questionnaire depth, monitoring cadence, re-assessment interval.

Process. The lifecycle stages, the artifacts required at each stage, and the approval gates. This is the operational section. It should point at the playbooks and templates rather than inline them.

Exceptions. How exceptions are raised, approved, documented, and reviewed. Exceptions that bypass the policy with no record are the single most common finding in internal audit reviews.

Review cadence. When the policy itself is reviewed, who signs off, and what triggers an out-of-cycle refresh (regulatory change, major incident, organizational change). Annual plus event-driven is the standard.

The companion spoke on the TPRM policy template includes a section-by-section template and an editable version. The policy is a short document. Twenty pages for a tier-1 financial services program, ten pages for most others. Anything longer is absorbing procedural detail that belongs in playbooks.
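The risk tiering section of the policy is the easiest one to express as logic. The sketch below shows one way a tiering matrix and its downstream workflow settings might look. The field names, rules, and cadences are all hypothetical placeholders; the real values come from your own policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorProfile:
    handles_regulated_data: bool  # e.g. personal or financial data in scope
    has_system_access: bool       # any access to internal or customer systems
    business_critical: bool       # an outage would stop a core process


def assign_tier(v: VendorProfile) -> int:
    """Map a vendor profile to a tier from 1 (highest risk) to 4 (lowest).

    ASSUMPTION: these rules stand in for the matrix in your own policy.
    """
    if v.handles_regulated_data and v.business_critical:
        return 1
    if v.handles_regulated_data or v.business_critical:
        return 2
    if v.has_system_access:
        return 3
    return 4


# Hypothetical downstream settings keyed by tier.
TIER_WORKFLOW = {
    1: {"questionnaire": "full", "reassess_months": 12, "monitoring": "continuous"},
    2: {"questionnaire": "full", "reassess_months": 12, "monitoring": "continuous"},
    3: {"questionnaire": "lite", "reassess_months": 24, "monitoring": "periodic"},
    4: {"questionnaire": "none", "reassess_months": 36, "monitoring": "none"},
}

marketing_tool = VendorProfile(False, False, False)
payroll_processor = VendorProfile(True, True, True)
print(assign_tier(marketing_tool), assign_tier(payroll_processor))
```

Keeping the tier rules and the workflow table separate means a policy change to cadence does not require touching the tiering logic, and the reverse.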

The TPRM stack: three layers

Most TPRM programs end up running three distinct categories of tooling. Each layer answers a different question. None of them covers the full surface alone.

Layer 1: Questionnaires and GRC. Evidence collection (SOC 2, ISO, DPAs, insurance, pen test summaries), structured questionnaires (SIG, CAIQ, custom), risk scoring, the vendor risk register, attestation workflow, and board reporting. OneTrust, ProcessUnity, Venminder, Archer, and MetricStream live here. Layer 1 is where the program's authoritative record lives. It is also the most expensive to implement and the slowest to update: each data point requires the vendor to act.

Layer 2: Cyber ratings. Continuous external scoring of cyber posture. BitSight, SecurityScorecard, Black Kite, and UpGuard sit in this layer. Cyber ratings answer what the vendor's exposure looks like from the outside. They catch issues the vendor has not disclosed and track posture drift over time. Ratings are directionally useful, noisy on point scores, and not a substitute for the evidence review in Layer 1.

Layer 3: Documentation-surface monitoring. Automated tracking of the pages vendors publish about themselves: sub-processor lists, trust centers, privacy policies, terms of service, certifications, status pages, and security documentation. These pages are the vendor's contemporaneous statement of how they operate. They move often. Across a broader sample of 7,551 privacy policies, 41 percent recorded a change in a 90-day window. Trust centers moved at 62 percent.
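The core idea behind Layer 3 can be sketched with the Python standard library: fingerprint each snapshot of a page, and when the fingerprint changes, diff the two versions to see what moved. This is a generic illustration, not a description of how Visualping or any other product works internally. The vendor names and page text are made up, and a real monitor would also need fetching, rendering, and noise filtering.

```python
import difflib
import hashlib


def fingerprint(text: str) -> str:
    """Hash the page text after collapsing whitespace, so reflows do not alert."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def changed_lines(old: str, new: str) -> list[str]:
    """Return added ('+ ') and removed ('- ') lines between two snapshots."""
    diff = difflib.ndiff(old.splitlines(), new.splitlines())
    return [line for line in diff if line.startswith(("+ ", "- "))]


# Hypothetical snapshots of a vendor's sub-processor page.
last_quarter = "Sub-processors\nAcme Cloud (US) - hosting\nMailCo (US) - email"
today = (
    "Sub-processors\nAcme Cloud (US) - hosting\nMailCo (US) - email\n"
    "DataCrunch (SG) - analytics"
)

if fingerprint(last_quarter) != fingerprint(today):
    for line in changed_lines(last_quarter, today):
        print(line)
```

The hash is a cheap first check across many pages; the line diff runs only for pages whose fingerprint has changed.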

Editorial disclosure. Visualping is our product. We built the Layer 3 category, and we are biased. If the program's priority is questionnaire automation with GRC workflow and board-grade attestation, OneTrust or ProcessUnity is the stronger pick. If the priority is continuous cyber rating across a large vendor portfolio, BitSight or SecurityScorecard fits better. Visualping does not issue questionnaires, score cyber posture, or run a risk register. It watches the pages vendors publish and routes changes to the right owners. The Limitations section of each category, including ours, describes where each one leaks.

All three layers address different parts of the same question: "is this vendor still the vendor we assessed?" Questionnaires answer it at contract time and on renewal. Cyber ratings answer it continuously, from the network edge. Documentation monitoring answers it at the vendor's own disclosure surface. Programs that run only Layer 1 find out about material changes months late. Programs that layer all three get the full picture. See Layer 3 monitoring in practice.

Operating model: one, two, three lines of defense

The three-lines model is the standard operating pattern for risk programs, and it fits TPRM cleanly. The question is whether the lines are distinct, or whether the program has quietly collapsed two of them and called it efficiency.

First line: the business owner and procurement. The business owner is the person who depends on the vendor to deliver their work. They are accountable for the relationship: performance, issues, exception requests, and renewal decisions. Procurement supports intake, contracting, and commercial terms. The first line generates the demand for TPRM activity and consumes the output.

Second line: TPRM, compliance, and enterprise risk. The second line owns the program. Policy, tiering, assessments, risk decisions, the vendor register, monitoring operations, metrics and reporting. The second line challenges first-line decisions when the risk evidence warrants it. It sets the standard and enforces it.

Third line: internal audit. The third line tests whether the first and second lines are doing what the policy says they do. It is independent, periodic, and evidence-based. A third line that simply agrees with the second line is not independent.

The failure pattern most programs see is a TPRM function that drifts into first-line execution. When TPRM is the one chasing questionnaires, organizing evidence, and negotiating exceptions with vendors, it is doing work the first line should own. That is how second-line judgment gets crowded out. The fix is sharp RACI, alerts routed to the accountable owner, and escalation criteria written in the policy.

Alert routing is the part of the operating model programs most consistently underinvest in. A continuous-monitoring signal that lands in a TPRM queue creates work for the second line. The same signal routed to the business owner with a clear "acknowledge or escalate" prompt creates accountability in the first line. For regulated processes, a copy should land with compliance. Routing logic belongs in the policy and the playbook, not in individual analysts' inboxes.
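The routing rule described above is simple enough to write down. The sketch below sends the action to the accountable business owner and copies compliance when the vendor supports a regulated process. The field names, the "compliance" recipient label, and the prompt wording are assumptions for illustration; the real rules belong in the policy and playbook.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    vendor: str
    signal: str              # e.g. "sub-processor change"
    business_owner: str      # accountable first-line owner from the vendor register
    regulated_process: bool  # does the vendor support a regulated process?


def route(alert: Alert) -> dict:
    """Decide who must act on an alert and who only receives a copy.

    ASSUMPTION: recipients and prompt text are illustrative placeholders.
    """
    return {
        "action": alert.business_owner,
        "prompt": "acknowledge or escalate",
        "copy": ["compliance"] if alert.regulated_process else [],
    }


alert = Alert(
    vendor="Example Payroll Inc.",  # hypothetical vendor
    signal="sub-processor change",
    business_owner="hr-operations",
    regulated_process=True,
)
print(route(alert))
```

Because the owner is read from the vendor record rather than chosen by an analyst, the routing stays consistent when staff change.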

Metrics and reporting

Program metrics answer three questions: does the program have coverage, does it work at the right pace, and is it catching what it should.

Coverage metrics. Percentage of vendors inventoried, percentage of tier-1 and tier-2 vendors with current due diligence, percentage of critical vendors covered by monitoring, percentage of contracts with current DPAs. Coverage numbers mean nothing if the denominator is the 40 vendors the program happens to track rather than the full inventory. Report against the full inventory or do not report coverage.

Timeliness metrics. Median days from intake to risk decision, median days to complete an annual questionnaire, time-to-remediation for critical findings, time-to-acknowledge for alerts. These show up in regulatory exams and internal audit. Most programs struggle with them because the data is scattered across procurement, GRC, and ticketing systems that do not share identifiers.

Change-signal metrics. Alerts generated per quarter, alerts that led to an escalation, alerts acknowledged by the business owner, alerts that triggered a re-assessment. These are the metrics that prove the monitoring layer is producing signal instead of noise. Roughly 1 in 16 sub-processor checks on monitored pages catches a change, across a 30-day window and 20,898 checks. Use that ratio as a tuning benchmark: tune when the ratio drifts significantly in either direction.
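The three metric families above reduce to a few lines of arithmetic once the data shares an inventory. The sketch below uses a made-up four-vendor inventory and a made-up quarter of monitoring data. Only the 1-in-16 benchmark comes from the figure quoted above; everything else is hypothetical.

```python
from statistics import median

# Hypothetical inventory: the FULL vendor list, not only the vendors being tracked.
inventory = [
    {"name": "A", "tier": 1, "dd_current": True, "days_to_decision": 21},
    {"name": "B", "tier": 1, "dd_current": False, "days_to_decision": 35},
    {"name": "C", "tier": 2, "dd_current": True, "days_to_decision": 14},
    {"name": "D", "tier": 4, "dd_current": False, "days_to_decision": None},
]

# Coverage: share of tier-1 and tier-2 vendors with current due diligence.
critical = [v for v in inventory if v["tier"] <= 2]
dd_coverage = sum(v["dd_current"] for v in critical) / len(critical)

# Timeliness: median days from intake to risk decision, where a decision exists.
decided = [v["days_to_decision"] for v in inventory if v["days_to_decision"] is not None]
median_days = median(decided)

# Change signal: checks per detected change, compared with the ~1-in-16 figure.
BENCHMARK_CHECKS_PER_CHANGE = 16
observed = 3200 / 100  # hypothetical quarter: 3,200 checks, 100 changes
drift = observed / BENCHMARK_CHECKS_PER_CHANGE

print(f"Tier 1-2 due diligence coverage: {dd_coverage:.0%}")
print(f"Median days to risk decision: {median_days}")
print(f"Checks per change: {observed:.0f} ({drift:.1f}x the benchmark)")
```

Vendor D has no risk decision yet, so it drops out of the timeliness median but still counts in the inventory. That is the denominator discipline the coverage paragraph asks for.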

Board reporting is where metric design meets judgment about what the board actually needs to see. The board does not want a raw dashboard. It wants the three or four numbers that tell it whether the program is holding, where the risk is concentrated, and what changed this quarter. A useful board report has four elements: a coverage number, a timeliness number, the incident or near-miss list for the quarter, and the forward view, which is what the program is watching and why. Anything else is detail that belongs in the full program report.

Regulators want more. Exam teams, audit committees, and internal audit expect the evidence chain: the policy, the tiering, the inventory, the individual risk decisions, the monitoring output, and the exception log. The reporting stack should produce both views from the same underlying data. The register and monitoring outputs need to live in systems that export evidence on request.

Case patterns and where to go next

Two program patterns cover most of what TPRM looks like in practice in 2026.

Banks and regulated financial services lean on the Interagency Guidance and FFIEC material for structure, run Layer 1 heavy with a named GRC platform, and are adding Layer 3 documentation monitoring to close the between-assessment gap. The Interagency Guidance's emphasis on "ongoing monitoring" has been the main catalyst for that shift. The banking spoke on regulatory compliance monitoring covers how examiners read those signals.

SaaS and technology companies operating in the EU have had to rebuild vendor registers and contract clauses for DORA, which pulled documentation governance to the center of TPRM. Programs that had treated sub-processor pages as a compliance footnote are now monitoring them as a first-class signal, because the registers have to stay current. The Salesforce sub-processors walkthrough shows what a well-governed sub-processor surface looks like, and what is a sub-processor is the primer for teams newer to the model.

The five spokes anchored to this pillar go deeper on each operational slice of the program. The continuous vendor monitoring piece covers the signal architecture and alert routing. The vendor onboarding spoke details the intake-to-production handoff and the fields TPRM needs from procurement. The vendor risk assessment walkthrough covers tiering logic and assessment depth by tier. The vendor risk management software comparison covers the three-layer tool market and how to shortlist by layer. The vendor due diligence checklist documents the artifact set collected at assessment and how to prioritize when timelines slip.

Two additional spokes round out the cluster. The horizon-scanning regulatory intelligence piece covers how TPRM teams track regulatory change beyond their own vendor surface. The automation workflows walkthrough documents the alert-routing patterns most programs end up building, regardless of platform.

Frequently asked questions

What is the meaning of third-party risk management?

Third-party risk management is the discipline of identifying, assessing, monitoring, and responding to the risks a company inherits from its vendors, processors, suppliers, and other external parties. It covers cybersecurity, privacy, operational, financial, regulatory, and reputational risk across the vendor lifecycle, from intake through offboarding. The goal is to keep the organization's risk posture aligned with its policy and regulatory obligations while its vendor base changes.

What are the five phases of third-party risk management?

Most framework literature describes five core phases: planning and tiering, due diligence and assessment, contracting and onboarding, ongoing monitoring, and termination or offboarding. Some references split monitoring into two phases (performance monitoring and risk monitoring), producing a six-phase model. The six-stage lifecycle in this guide instead separates initial screening from full assessment. The underlying flow is consistent: understand the relationship, qualify the vendor, contract and integrate them, watch them while they are in scope, and exit cleanly when the relationship ends.

What is an example of a third-party risk?

A common example: a SaaS vendor quietly adds a new sub-processor in a jurisdiction your data transfer agreement does not cover. Your DPA is silently out of compliance. If an incident occurs involving that sub-processor, you are on the hook for a data transfer you never authorized. The vendor's sub-processor page shows the change the day it happens, but most programs only review those pages annually. Other common examples: a vendor's SOC 2 expires without renewal, a critical vendor's cyber rating drops sharply after a breach, a vendor's financial condition deteriorates toward insolvency, a vendor's privacy policy updates in a way that weakens your contractual protections.
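The sub-processor example can be turned into a simple check: compare the locations on the vendor's published list against the jurisdictions your transfer terms cover. The data below is invented, and whether a transfer is actually covered is a legal question that a country-code lookup cannot settle. Treat this as a first-pass flag for review, not a compliance decision.

```python
# Hypothetical data: jurisdictions covered by your DPA's transfer terms,
# and the sub-processor list as currently published by the vendor.
DPA_COVERED = {"US", "DE", "IE"}

published_subprocessors = [
    {"name": "Acme Cloud", "country": "US"},
    {"name": "MailCo", "country": "IE"},
    {"name": "DataCrunch", "country": "SG"},  # newly added entry
]


def uncovered(subprocessors, covered):
    """Return sub-processors located outside the covered jurisdictions."""
    return [s for s in subprocessors if s["country"] not in covered]


for s in uncovered(published_subprocessors, DPA_COVERED):
    print(f"Review needed: {s['name']} ({s['country']}) is outside the DPA's transfer scope")
```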

What are the four core third-party risk types?

The four most commonly cited: cybersecurity and data security, operational resilience, compliance and regulatory, and financial or reputational. Most mature programs track more than four, adding concentration, geopolitical, and ESG risk as separate categories, but the core four are the ones every framework covers.

How is third-party risk management different from vendor risk management?

Third-party risk management is the broader term. Vendor risk management, or VRM, typically refers to the assessment and monitoring of commercial suppliers. TPRM includes VRM and extends to non-vendor third parties: processors, subcontractors, joint-venture partners, affiliates, data recipients, and fourth parties. In day-to-day use, the terms overlap, and most practitioners treat them as synonymous. For regulatory work, TPRM is the term supervisors and framework documents use.

Who owns the TPRM program?

Ownership varies by industry and company size. In banks and large regulated firms, a dedicated TPRM function inside GRC or the CISO organization owns the program and coordinates procurement, security, compliance, and legal. In mid-market companies, the program usually sits inside security or compliance with procurement running intake. In smaller firms, a single risk or security lead owns TPRM alongside other responsibilities. In every model, the principle is the same: a named function owns the policy, the inventory, and the monitoring output, and business owners are accountable for their individual vendor relationships.

Add documentation-surface monitoring to your TPRM stack

Visualping watches the pages vendors publish about themselves (sub-processors, trust centers, privacy, ToS, certifications) and routes changes to the right owners, so your program stays current between questionnaires.

Visualping Editorial Team

The Visualping Editorial Team covers third-party risk management, regulatory compliance monitoring, and the operational systems that keep them current.