Vendor Risk Assessment: The Complete Guide + Template

By The Visualping Team

Updated April 17, 2026

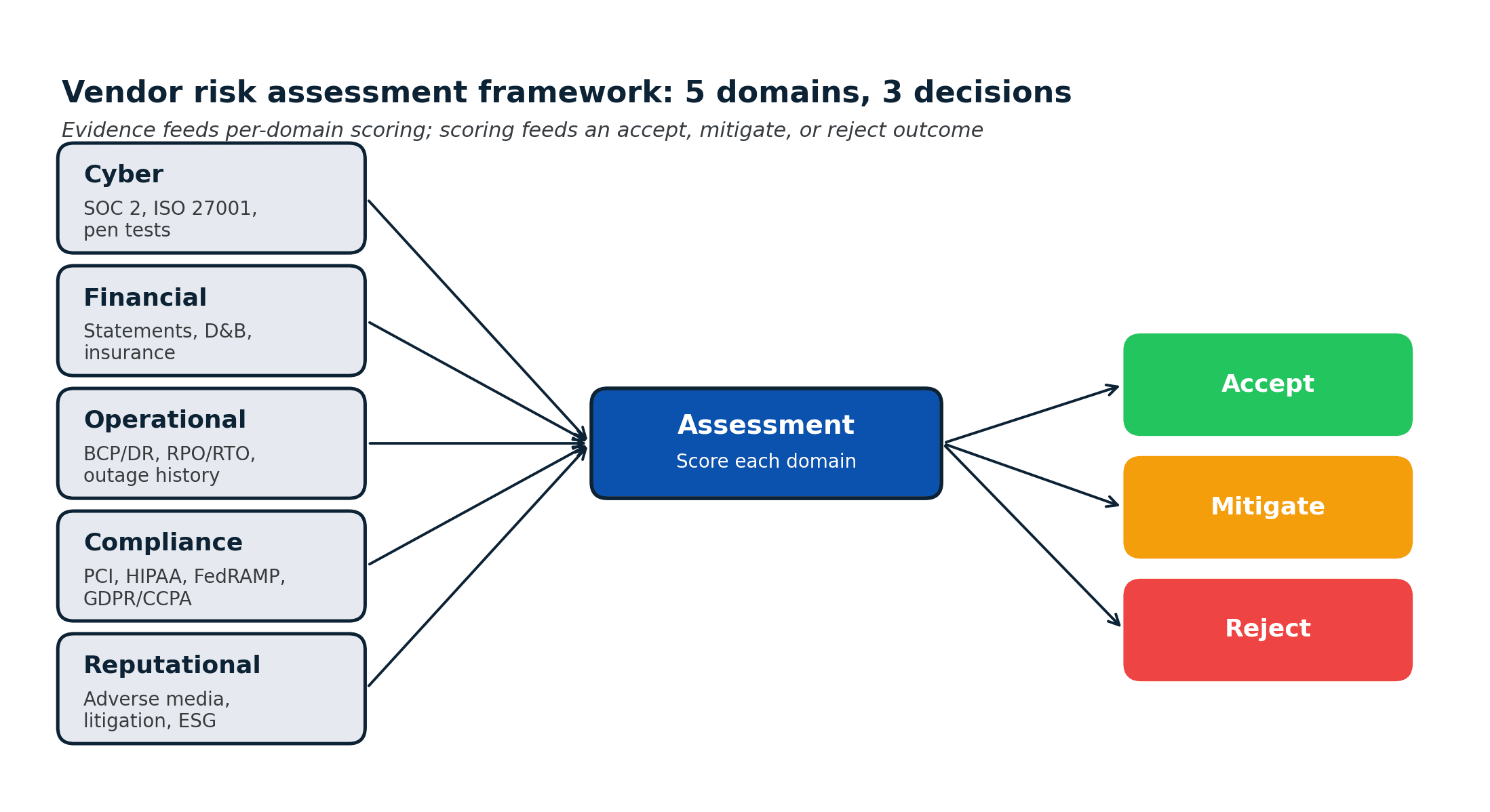

This guide covers the practical mechanics of vendor risk assessment: when to run one, the 5-domain risk framework, the step-by-step process, the downloadable template, software tiers by company size, and where most programs quietly break.

A vendor risk assessment is the structured evaluation you run before (and periodically after) bringing a third party into systems that touch your data, your customers, or your balance sheet. Done well, it produces a defensible risk register entry and a clear accept/mitigate/reject verdict. Done poorly, it produces a PDF nobody re-reads until something goes wrong.

Most programs under-invest in the methodology (scoping, tiering, scoring, and decision rules) and over-invest in filling out the questionnaire. This guide flips that weighting. The goal is a vendor risk assessment that is fast enough to actually run on every tier-1 vendor, reliable enough to defend to auditors, and connected enough to continuous monitoring that it keeps producing value long after the contract is signed.

In brief

- A vendor risk assessment is a point-in-time evaluation of a third party's risk across five domains: cyber, financial, operational, compliance, and reputational.

- Run one at onboarding, on renewal, when scope changes, after an incident at the vendor, and whenever a material change is detected in ongoing monitoring.

- The 5-step process is: scope and tier, collect evidence, score risk by domain, decide (accept/mitigate/reject), document and enroll in monitoring.

- Use an existing template (SIG Lite, CAIQ) rather than building one. Customize only where your industry genuinely requires it.

- The assessment is the start, not the end. Static risk pictures decay quickly: on our platform, 67% of 1,132 sampled sub-processor pages and 41% of 7,551 monitored privacy policies changed at least once within a 90-day window.

What a vendor risk assessment actually is

A vendor risk assessment is a structured evaluation of a third party against your control requirements. It produces:

- A risk score per domain (cyber, financial, operational, compliance, reputational).

- A decision (accept, accept with mitigation, reject).

- A risk register entry that names the residual risk, the owner, the mitigation, and the review cadence.

- A handoff artifact to procurement, legal, IT, and AP so the onboarding flow can complete.

It is not the same as:

- Vendor due diligence, which is the evidence-collection step feeding into the assessment.

- Vendor onboarding, the end-to-end flow that includes the assessment as one stage. The risk-led vendor onboarding guide walks through the 7-stage process.

- Continuous vendor monitoring, the ongoing detection of changes after the assessment is complete. The continuous vendor monitoring guide covers the post-assessment layer.

Think of the assessment as the gate. Due diligence produces the evidence the gate evaluates. Monitoring watches what happens after the gate closes.

When to run a vendor risk assessment

Most programs default to "at onboarding and at renewal." That is not enough. Use this six-trigger list instead:

- Onboarding, before contract execution.

- Contract renewal, typically annually for tier-1 vendors.

- Scope change: the vendor starts processing new data categories, expanding to new regions, or accessing new systems.

- Incident at the vendor, including breach disclosures, SEC filings, or material press coverage.

- Material change in ongoing monitoring: sub-processor additions, DPA amendments, certification lapses, trust center changes.

- Regulatory trigger: new obligations (DORA, SEC cyber rule, EU NIS2, state privacy laws) that require re-examination of existing vendor relationships.

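The first two triggers (onboarding and renewal) are scheduled milestones. The remaining four (scope change, vendor incident, monitoring-detected change, regulatory trigger) are event-driven. Programs that handle only the scheduled triggers have blind spots that compound over time.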
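To see why calendar-only programs accumulate blind spots, the six triggers can be modeled as an enumeration and checked against what a program actually acts on. This is a minimal Python sketch; the `Trigger` member names and the `blind_spots` helper are illustrative, not part of any standard or tool.

```python
from enum import Enum


class Trigger(Enum):
    """The six reassessment triggers from the list above (member names are illustrative)."""
    ONBOARDING = "onboarding"
    RENEWAL = "renewal"
    SCOPE_CHANGE = "scope change"
    VENDOR_INCIDENT = "incident at the vendor"
    MONITORING_CHANGE = "material change in ongoing monitoring"
    REGULATORY = "regulatory trigger"


# Onboarding and renewal are scheduled milestones; the other four are event-driven.
SCHEDULED = frozenset({Trigger.ONBOARDING, Trigger.RENEWAL})


def classify(trigger: Trigger) -> str:
    """Label a trigger as 'scheduled' or 'event-driven'."""
    return "scheduled" if trigger in SCHEDULED else "event-driven"


def blind_spots(handled: set) -> list:
    """Triggers the program does not act on, in the order they are defined."""
    return [t for t in Trigger if t not in handled]


# A program that only acts on scheduled triggers misses every event-driven one.
for gap in blind_spots(set(SCHEDULED)):
    print(f"{gap.value}: {classify(gap)}")
```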

The 5-domain risk framework

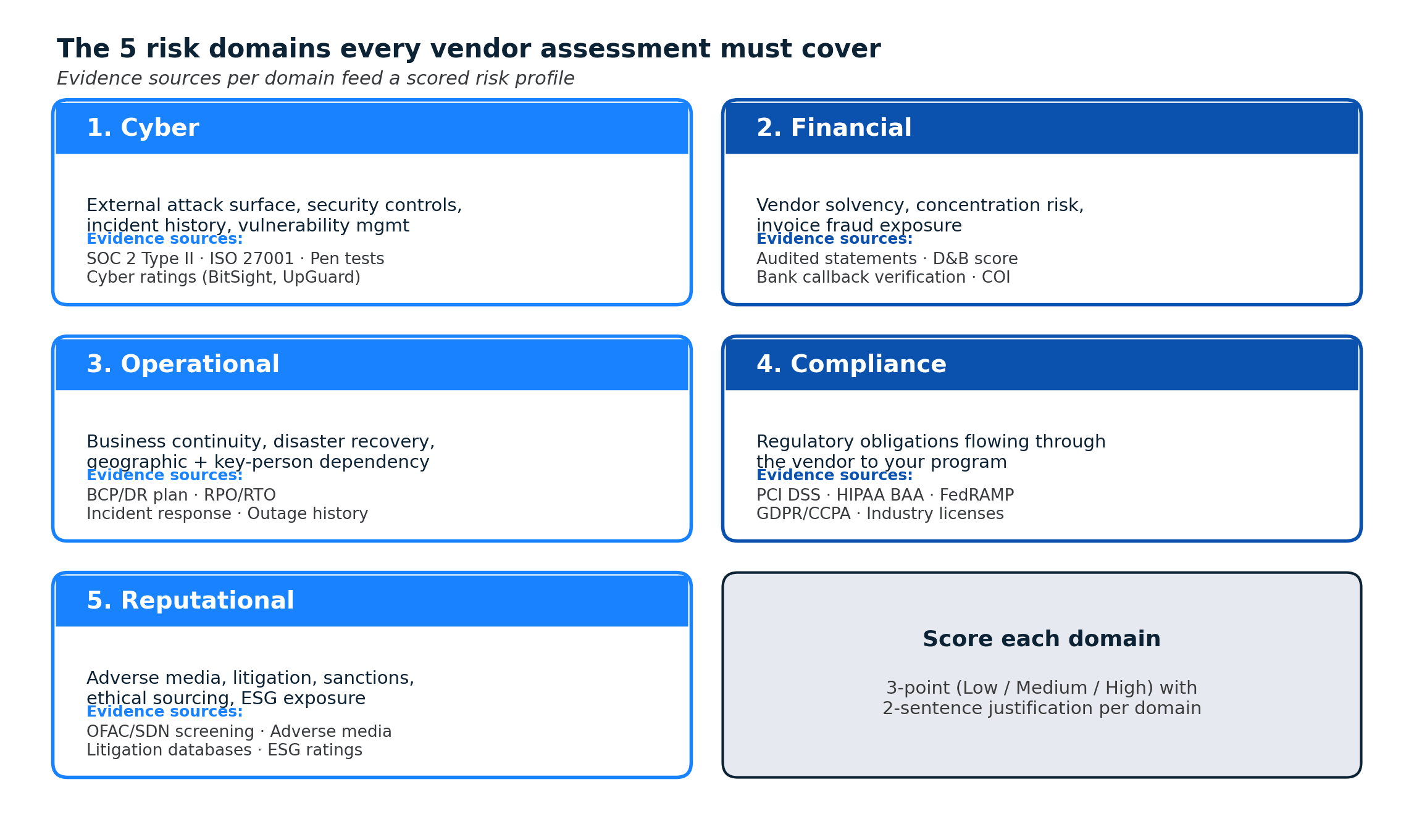

The major questionnaires and frameworks (SIG, CAIQ, NIST guidance, ISO/IEC 27036) cover overlapping ground that groups into a small number of risk domains. Use these five:

1. Cyber risk

External attack surface, security controls, incident history, vulnerability management, and supply chain cyber posture. Evidence sources: SOC 2 Type II, ISO/IEC 27001, penetration test summaries, breach history. Scoring inputs: cyber ratings platforms (BitSight, SecurityScorecard, UpGuard) plus self-attestation.

2. Financial risk

Vendor solvency, concentration risk (over-reliance on a small customer base), and payment / invoice fraud exposure. Evidence sources: audited financial statements, D&B score, bank verification callbacks, insurance certificates. This domain is high-stakes for vendors with multi-year contracts or hard-to-migrate dependencies.

3. Operational risk

Business continuity, disaster recovery, geographic concentration, and key-person dependency. Evidence sources: BCP/DR plan, RPO/RTO commitments, incident response procedure, public outage history.

4. Compliance risk

Regulatory obligations that flow through the vendor to your program. Evidence sources: PCI DSS attestation, HIPAA BAA, FedRAMP authorization, GDPR / CCPA posture, industry-specific licenses. Programs that map to the NIST Cybersecurity Framework 2.0 typically tie these evaluations to the GV.SC (Cybersecurity Supply Chain Risk Management) category under the Govern function; CSF 1.1 placed the equivalent category under Identify as ID.SC.

5. Reputational risk

Adverse media, litigation history, sanctions screening, ethical sourcing, ESG exposure. Evidence sources: OFAC/SDN screening tools, adverse media feeds, litigation databases, ESG ratings. Weight varies by industry: regulated and public-facing industries weight this domain heavily; internal-facing SaaS can weight it lightly.

The vendor risk assessment process (5 steps)

This is the step-by-step sequence that holds up at both 30 vendors and 3,000. Each step has an owner and a clear exit artifact.

Step 1: Scope and tier

Owner: procurement coordinator or TPRM analyst. Exit: vendor assigned to tier 1 / 2 / 3, with scope documented.

Scope captures what the vendor will touch: data categories, systems, regions, contract value, criticality. Tiering is the filter that decides how much assessment depth applies. Tier 3 (commodity, no data access) gets a 1-page check. Tier 1 (regulated or sensitive data, critical function) gets the full five-domain framework described above. Tier 2 sits between the two.

A common mistake is running a full assessment on every vendor. Tiering exists so you don't.
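Tiering rules are easiest to audit when they are written down as explicit logic. The sketch below assumes three hypothetical scope flags (`data_access`, `sensitive_data`, `critical_function`) and treats either sensitive data or a critical function as enough for tier 1; the tier-2 depth label is also an assumption. Adjust both to your own criteria.

```python
from dataclasses import dataclass


@dataclass
class VendorScope:
    """Scope captured in Step 1 (hypothetical field names)."""
    name: str
    data_access: bool        # touches any of your data or systems
    sensitive_data: bool     # regulated or sensitive data categories
    critical_function: bool  # supports a critical business function


# Assessment depth per tier; the tier-2 wording is an assumption.
DEPTH = {
    1: "full five-domain assessment",
    2: "reduced assessment on the domains that apply",
    3: "1-page check",
}


def assign_tier(scope: VendorScope) -> int:
    """Tier 1 if sensitive data or a critical function is in scope; tier 3 if no data access."""
    if scope.sensitive_data or scope.critical_function:
        return 1
    if not scope.data_access:
        return 3
    return 2


vendor = VendorScope("Office supplies", data_access=False,
                     sensitive_data=False, critical_function=False)
print(vendor.name, "->", DEPTH[assign_tier(vendor)])  # Office supplies -> 1-page check
```

Checking the tier-1 conditions first means a critical vendor is never downgraded just because it lacks data access.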

Step 2: Collect evidence

Owner: TPRM coordinator. Exit: complete evidence package in the file system.

Pull from the vendor's public trust portal or evidence room first; send a custom questionnaire only for gaps. Using an existing industry questionnaire (SIG Lite, CAIQ) saves weeks of back-and-forth because many vendors already have responses prepared.

The vendor due diligence checklist has the full 30-item artifact list. The short version: SOC 2, ISO 27001, DPA, sub-processor list, insurance, financial statements, BCP/DR, privacy policy, certifications, and industry-specific attestations.
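The trust-portal-first approach reduces to a set difference: whatever the portal already provides drops out, and only the remainder goes into a questionnaire. A minimal sketch, using illustrative keys for the short artifact list above (industry-specific attestations would be added to `REQUIRED` for your sector).

```python
# Short artifact list from the text above; keys are illustrative.
REQUIRED = frozenset({
    "soc2", "iso27001", "dpa", "subprocessor_list", "insurance",
    "financial_statements", "bcp_dr", "privacy_policy", "certifications",
})


def evidence_gaps(from_trust_portal: set, required: frozenset = REQUIRED) -> list:
    """Artifacts still missing after the trust-portal pull; only these go into a questionnaire."""
    return sorted(required - from_trust_portal)


found = {"soc2", "iso27001", "dpa", "subprocessor_list", "privacy_policy"}
print(evidence_gaps(found))
# ['bcp_dr', 'certifications', 'financial_statements', 'insurance']
```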

Step 3: Score risk by domain

Owner: security and privacy. Exit: a risk score per domain with supporting evidence.

Score each of the five domains on a 3- or 5-point scale (Low / Medium / High works fine; avoid 10-point scales, whose false precision the evidence rarely supports). Document the evidence behind each score. A 2-sentence justification per domain is enough for tier-1 vendors.

Sub-processor lists are a concrete example. All else being equal, a vendor with 50 sub-processors in 12 jurisdictions scores higher on cyber and compliance risk than one with 3 sub-processors in a single jurisdiction. Two vendors offering comparable products can carry very different aggregate risk profiles.
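A 3-point scale with mandatory justifications can be enforced in a few lines. The sub-processor thresholds in `subprocessor_level` (20 entries or 5 jurisdictions for High) are hypothetical placeholders chosen only to illustrate the sub-processor example, not recommended values.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    """3-point scale; IntEnum keeps levels comparable (LOW < HIGH)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class DomainScore:
    domain: str
    level: Level
    justification: str

    def __post_init__(self) -> None:
        # A score without written evidence behind it is not defensible.
        if not self.justification.strip():
            raise ValueError(f"{self.domain}: justification is required")


def subprocessor_level(count: int, jurisdictions: int) -> Level:
    """Hypothetical thresholds: more sub-processors in more jurisdictions means more risk."""
    if count >= 20 or jurisdictions >= 5:
        return Level.HIGH
    if count >= 5 or jurisdictions >= 2:
        return Level.MEDIUM
    return Level.LOW


wide = DomainScore("compliance", subprocessor_level(50, 12),
                   "50 sub-processors across 12 jurisdictions.")
narrow = DomainScore("compliance", subprocessor_level(3, 1),
                     "3 sub-processors in a single jurisdiction.")
```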

Step 4: Decide

Owner: TPRM lead, with input from legal, security, and the business owner. Exit: written decision (accept / accept with mitigation / reject) and any required contract clauses.

The decision balances the scored risk against the business value. Common outputs:

- Accept: risk is within tolerance, move to contract.

- Accept with mitigation: specific contract clauses, technical controls, or compensating controls required before go-live.

- Compensating control request: vendor does not meet the standard but has a documented alternative control that reduces risk to tolerance.

- Reject: find an alternative vendor.

Programs with a transparent decision rubric move faster, and are easier to defend to auditors, than programs that leave the decision to individual judgment.
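One way to make the rubric transparent is to encode it. This sketch assumes 1-3 domain scores and a simple hypothetical rule: accept when nothing scores High, accept with mitigation when every High domain has a documented mitigation or compensating control, and reject otherwise. A real rubric would also weigh business value, which this sketch ignores.

```python
DOMAINS = ("cyber", "financial", "operational", "compliance", "reputational")


def decide(scores: dict, mitigations: dict) -> str:
    """Hypothetical rubric over 1-3 scores (1 = Low, 2 = Medium, 3 = High).

    - No domain scored High: accept.
    - Every High domain has a documented mitigation or compensating control:
      accept with mitigation.
    - Otherwise: reject.
    """
    if set(scores) != set(DOMAINS):
        raise ValueError("score all five domains before deciding")
    high = [d for d in DOMAINS if scores[d] == 3]
    if not high:
        return "accept"
    if all(mitigations.get(d) for d in high):
        return "accept with mitigation"
    return "reject"


base = dict.fromkeys(DOMAINS, 2)
risky = {**base, "cyber": 3}
print(decide(risky, {"cyber": "pen-test remediation clause before go-live"}))
# accept with mitigation
```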

Step 5: Document and enroll in monitoring

Owner: TPRM coordinator. Exit: risk register entry + continuous monitoring enrolled.

The final step is what separates a working program from a paper program. Document the decision, the owner, the review cadence, and the compensating controls in the risk register. Then enroll the vendor's DPA, sub-processor list, trust center, and ToS in continuous monitoring so the program captures drift after the assessment instead of relying on a frozen snapshot.

This is where most programs leak. In a sample of 1,132 sub-processor pages monitored on our platform, 67% logged at least one change in a 90-day window. Across 7,551 active privacy policy monitors, 41% changed in the same window. Trust centers also changed frequently: 62% of 295 active monitors changed within 90 days. An assessment that does not feed ongoing monitoring can be out of date within a quarter.
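Drift detection on a sub-processor list can be as simple as comparing two text snapshots. This sketch assumes you already have each snapshot as plain text with one sub-processor per line (how the page is fetched, whether by a monitoring tool or otherwise, is out of scope), and it treats any addition or removal as material, which is a deliberately strict assumption. The function names are hypothetical.

```python
def entries(snapshot: str) -> set:
    """One sub-processor per non-blank line; surrounding whitespace is ignored."""
    return {line.strip() for line in snapshot.splitlines() if line.strip()}


def subprocessor_diff(before: str, after: str) -> dict:
    """Added and removed entries between two snapshots of a sub-processor list."""
    old, new = entries(before), entries(after)
    return {"added": sorted(new - old), "removed": sorted(old - new)}


def is_material(diff: dict) -> bool:
    """Treat any addition or removal as a change that reopens the assessment."""
    return bool(diff["added"] or diff["removed"])


before = "Cloud Host A\nPayments B\n"
after = "Cloud Host A\nPayments B\nAnalytics C\n"
diff = subprocessor_diff(before, after)
print(diff, is_material(diff))
# {'added': ['Analytics C'], 'removed': []} True
```

Comparing parsed entries rather than raw page bytes means whitespace-only edits do not trigger a reassessment.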

Vendor risk assessment template (what to download, what to build)

The honest answer: use an existing template before you build one.

- SIG Lite (Shared Assessments): the industry-standard shorter questionnaire. Widely accepted, and it saves vendors from repeating work.

- SIG Core: the full SIG questionnaire. Use for tier-1 vendors in regulated industries.

- CAIQ (Cloud Security Alliance): cloud-native control questionnaire. Use for SaaS and IaaS vendors.

- NIST Cybersecurity Framework mapping: pair the questionnaire with CSF 2.0 function mapping (GV, ID, PR, DE, RS, RC) for audit defensibility.

Where building your own makes sense: the scoring rubric and the decision record, not the questionnaire itself. Your rubric should encode your risk tolerance (what makes a vendor "high" in cyber risk for your specific data classification). Your decision record should match your governance flow.

A working template set for a mid-market program:

- SIG Lite questionnaire (external).

- Your internal scoring rubric (5 domains, 3-point scale, justifications required).

- Your decision record template (accept / mitigate / reject with rationale, compensating controls, review cadence).

- Your risk register entry (vendor, tier, scored domains, controls, owner, next review).
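If the register lives in a spreadsheet, a small typed record keeps entries consistent before export. This sketch mirrors the register fields listed above; the class name, column order, and `;`-joined controls column are assumptions, and the CSV output could be pasted into one tab of the workbook.

```python
import csv
import io
from dataclasses import dataclass, field
from datetime import date
from typing import Optional

DOMAINS = ["cyber", "financial", "operational", "compliance", "reputational"]


@dataclass
class RiskRegisterEntry:
    """One register row; fields mirror the list above."""
    vendor: str
    tier: int
    scores: dict                                  # domain -> "Low" / "Medium" / "High"
    controls: list = field(default_factory=list)  # mitigations and compensating controls
    owner: str = ""
    next_review: Optional[date] = None


def to_csv(entries: list) -> str:
    """Serialize entries as CSV with one column per domain."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["vendor", "tier", *DOMAINS, "controls", "owner", "next_review"])
    for e in entries:
        writer.writerow([
            e.vendor,
            e.tier,
            *(e.scores.get(d, "") for d in DOMAINS),
            "; ".join(e.controls),
            e.owner,
            e.next_review.isoformat() if e.next_review else "",
        ])
    return buf.getvalue()


entry = RiskRegisterEntry(
    "Example Vendor", 1,
    {"cyber": "High", "financial": "Low", "operational": "Medium",
     "compliance": "Medium", "reputational": "Low"},
    ["SSO enforced", "breach-notice clause"], "TPRM lead", date(2027, 4, 17),
)
print(to_csv([entry]))
```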

Avoid over-engineering. A 3-tab Excel file covers it for most programs. Customization beyond that belongs in your GRC system, not a template.

Vendor risk assessment software (tools by company size)

Three tiers of tooling match the three realistic program sizes.

SMB (under 200 employees)

- Stack: spreadsheet + shared Drive + DocuSign + a lightweight VRM like Whistic or a self-managed SIG Lite process.

- Cost: typically under $500 per month.

- Break-even for dedicated software: ~10 new tier-1 vendors per quarter.

Mid-market (200-2,500 employees)

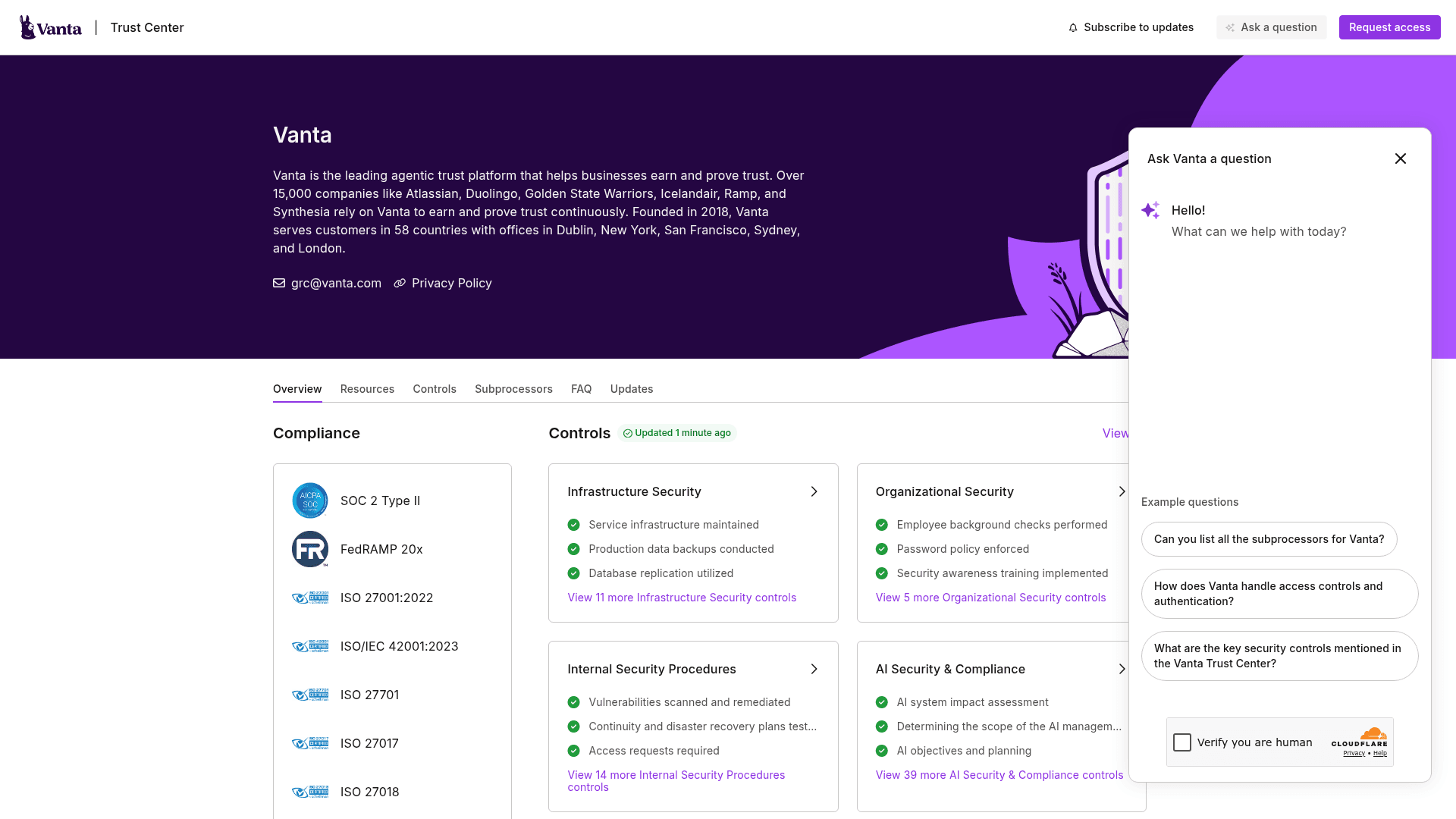

- Stack: dedicated VRM (Panorays, Venminder, Whistic, Prevalent) + a cyber ratings layer (BitSight, SecurityScorecard, UpGuard) + continuous monitoring (Visualping, Fluxguard) for the documentation surface.

- Cost: $25k-$150k per year across the stack.

- Break-even: 30-50 new tier-1 vendors per quarter.

Enterprise (2,500+ employees)

- Stack: full GRC platform (OneTrust, ProcessUnity, Archer, ServiceNow IRM) with automated evidence collection, board-level reporting, and integrations across ERP, identity, CLM, and cyber ratings.

- Cost: $150k-$1M+ per year.

- Break-even: effectively immediate. At this scale, the cost of not automating typically far exceeds software licensing.

The category splits into three functional layers (intake/workflow, vendor risk management, continuous monitoring), and most mature programs run at least two layers because no single product covers all three well. The compliance monitoring software roundup covers the broader landscape adjacent to vendor-specific tools.

Where most vendor risk assessment programs break

Six failure modes repeat across programs. Each one has a narrow, specific intervention that fixes it without requiring a rebuild.

- The assessment is disconnected from the risk register. Fix: require that every completed assessment produces a risk register entry with a named owner, a review date, and the underlying evidence link.

- Questionnaire responses go stale. Fix: enforce a maximum age (90 days for SOC 2 Type II references, 12 months for ISO cert copies) and automate the re-request.

- Tier-1 vendors get the same assessment as tier-3. Fix: let tiering gate depth. A tier-3 vendor with no data access should never get the full SIG Core treatment.

- The decision record is a single sentence. Fix: require scored domains, evidence links, compensating controls, and a written rationale on every tier-1 decision. Auditors read these.

- Post-assessment drift is invisible. Fix: enroll tier-1 vendor DPAs, sub-processor lists, and trust centers in continuous monitoring, and route the resulting alerts into the risk register through the same automation workflows that already run other parts of your risk program.

- There is no second set of eyes on high-risk acceptances. Fix: require security or privacy sign-off on any tier-1 vendor decision above a defined risk threshold.
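The stale-evidence fix above can be automated with a date comparison. This sketch uses the maximum ages given there (90 days for SOC 2 Type II references, 12 months for ISO certificate copies, approximated as 365 days); the evidence-type keys and `stale_evidence` are hypothetical, and the re-request itself is left to your workflow tooling.

```python
from datetime import date, timedelta

# Maximum evidence ages from the list above; 12 months is approximated as 365 days.
MAX_AGE = {
    "soc2_type2": timedelta(days=90),
    "iso_cert": timedelta(days=365),
}


def stale_evidence(received: dict, today: date) -> list:
    """Evidence types older than their maximum age, i.e. due for an automatic re-request."""
    return sorted(
        kind
        for kind, received_on in received.items()
        if kind in MAX_AGE and today - received_on > MAX_AGE[kind]
    )


on_file = {"soc2_type2": date(2026, 1, 1), "iso_cert": date(2025, 6, 1)}
print(stale_evidence(on_file, today=date(2026, 4, 17)))  # ['soc2_type2']
```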

Frequently asked questions

What is a vendor risk assessment?

A vendor risk assessment is a structured, point-in-time evaluation of a third-party vendor against your control requirements. It produces a scored risk profile across five domains (cyber, financial, operational, compliance, reputational), a written decision (accept / mitigate / reject), and a risk register entry with an owner, controls, and review cadence. It is run at onboarding, on renewal, when scope changes, after a vendor incident, and when continuous monitoring detects a material change.

What are the 5 things a risk assessment should include?

A credible vendor risk assessment includes: (1) a defined scope and tier, (2) evidence across the five risk domains, (3) a scored risk profile per domain with supporting justification, (4) a decision record with accept / mitigate / reject rationale, and (5) a risk register entry connected to ongoing monitoring. Assessments missing any of these five elements are harder to defend during audit and decay faster between reviews.

What is the vendor risk assessment process?

The process has five steps: scope and tier the vendor, collect evidence using an industry questionnaire (SIG Lite, CAIQ) plus artifacts, score risk by domain against your rubric, decide (accept / mitigate / reject), and document the decision in the risk register while enrolling the vendor's key pages in continuous monitoring. Each step has a named owner and a clear exit artifact.

How long does a vendor risk assessment take?

For a tier-3 commodity vendor, a lightweight assessment can complete in 1-2 days. For a tier-1 vendor touching sensitive data, a full assessment typically takes 2-5 weeks, driven mostly by evidence collection (Step 2). Programs that pull evidence from vendor trust portals rather than sending custom questionnaires compress this significantly.

What is the difference between vendor risk assessment and vendor due diligence?

Vendor due diligence is the evidence-collection step (gathering SOC 2 reports, DPAs, insurance certificates, financial statements). The vendor risk assessment is the evaluation of that evidence against your control requirements and the production of a scored, documented decision. In practice they are often treated as one process, but separating them clarifies ownership and makes both auditable.

What are the 5 risk assessment methods?

The five common methods are: qualitative scoring (Low/Medium/High per domain), quantitative risk analysis (probability × impact in dollars), hybrid methods (qualitative with quantitative anchors for high-risk items), control-based assessment (conformance with a framework like NIST CSF or ISO 27001), and continuous assessment (real-time scoring fed by external signals like cyber ratings and page-level monitoring). Most practical programs use a qualitative primary method reinforced by continuous signals.
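The quantitative and hybrid methods can be illustrated with a worked example. Under the quantitative method, a 5% annual chance of an incident costing $2,000,000 gives an expected loss of 0.05 × 2,000,000 = $100,000 per year. The hybrid step below maps that dollar figure back onto Low / Medium / High using thresholds you set; the function names and threshold values are hypothetical.

```python
def expected_loss(annual_probability: float, impact_usd: float) -> float:
    """Quantitative method: probability x impact, in dollars per year."""
    if not 0.0 <= annual_probability <= 1.0:
        raise ValueError("annual_probability must be between 0 and 1")
    return annual_probability * impact_usd


def hybrid_level(loss_usd: float, medium_from: float, high_from: float) -> str:
    """Hybrid method: anchor a qualitative level to dollar thresholds (yours to set)."""
    if loss_usd >= high_from:
        return "High"
    if loss_usd >= medium_from:
        return "Medium"
    return "Low"


loss = expected_loss(0.05, 2_000_000)
# Hypothetical tolerance: Medium from $50,000 per year, High from $250,000 per year.
print(hybrid_level(loss, medium_from=50_000, high_from=250_000))  # Medium
```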

What is the 3-vendor rule?

The 3-vendor rule is a procurement practice of soliciting bids from at least three qualified vendors before making a selection. It is a sourcing control, not a risk assessment method. It belongs in the early procurement intake stage, before the risk assessment is performed. In regulated or narrow-market categories, the rule is often waived with documented justification.

Do vendor risk assessments need to be redone for existing vendors?

Yes, on a scheduled cadence and in response to triggers. Tier-1 vendors: annually at minimum, with faster re-assessment after detected changes. Tier-2: every 18-24 months. Tier-3: on a long cycle (24-36 months) or only when triggers fire. Between scheduled reviews, continuous monitoring fills the gap that otherwise leaves a "static then stale" risk picture.
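Scheduled review dates follow mechanically from the tier cadence. This sketch takes the shorter end of each range above (12, 18, and 24 months) as an assumption, and pulls the review forward to the current date whenever a trigger fires; `next_review` and `add_months` are illustrative helpers, not part of any tool.

```python
import calendar
from datetime import date

# Months between scheduled reviews (shorter end of each range in the text).
CADENCE_MONTHS = {1: 12, 2: 18, 3: 24}


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the length of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def next_review(last_review: date, tier: int, today: date, trigger_fired: bool = False) -> date:
    """Scheduled review date, or today if a trigger has fired since the last review."""
    if trigger_fired:
        return today
    return add_months(last_review, CADENCE_MONTHS[tier])


print(next_review(date(2026, 4, 17), tier=2, today=date(2026, 5, 1)))  # 2027-10-17
```

Clamping the day keeps a review due on January 31 from failing in February; it lands on the last day of that month instead.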

A complete vendor risk assessment is the gate, not the finish line.

For a program-level view of how this piece fits into the broader lifecycle, frameworks, and operating model, see the TPRM program guide.

Where to go next

The continuous vendor monitoring guide covers the post-assessment layer that keeps scored risk current. The vendor onboarding process shows how the assessment fits into the broader request-to-go-live flow. For the evidence-collection step specifically, the vendor due diligence checklist covers the 30-item artifact list. And for the sub-processor dimension in particular, the sub-processor selection guide walks through how to evaluate a new entry before it ever lands on a vendor's list.

This article summarizes industry practice for vendor risk assessment and the frameworks that underpin it. It is not legal, compliance, or audit advice. Specific regulatory obligations, scoring rubrics, and decision thresholds should be reviewed with your program's TPRM, privacy, and legal owners before relying on them.


The Visualping Team

The Visualping Team is the content and product marketing group at Visualping, a leading platform for website change detection and competitive intelligence. We write about automation, web monitoring, and tools that help businesses stay ahead.