Agent Search Optimization: Your Website's Third Audience

By Eric Do Couto

Updated March 28, 2026

Most teams are optimizing for the wrong audience.

For 30 years, websites served two audiences: people and search engines. Every SEO playbook, every CRO test, every A/B experiment assumed a human was on the other end. That assumption is breaking.

A third audience just showed up, and it doesn't scroll, doesn't read your hero copy, and doesn't care about your brand story. AI agents are browsing the web for users now: evaluating products, comparing prices, reading documentation, and recommending which vendor to buy from. They care about one thing: whether your website is structured clearly enough for a machine to read and evaluate.

Agent search optimization (ASO) is the practice of making your website discoverable, evaluable, and actionable by these agents. On March 20, 2026, Google added a new user agent called Google-Agent to its official documentation. The W3C is building infrastructure (called WebMCP) to make agent-site interactions a formal web standard.

Your website either works for these machines, or it gets skipped for a competitor's that does.

What Is Agent Search Optimization?

Here's the problem most teams don't see yet. They've spent years getting found (SEO) and getting cited (AEO). Those are table stakes now. Agent search optimization adds the layer they're missing: making your website usable by machines that act on a user's behalf.

Three layers, each building on the last:

| Layer | Goal | Example |
|---|---|---|
| SEO | Be found | Your page appears in Google search results |
| AEO/GEO | Be cited | ChatGPT or Perplexity mentions your brand in an answer |
| ASO | Be usable | An AI agent compares your pricing plans and recommends one to the user |

Each layer builds on the one below. Strong SEO is the floor. AI visibility requires authority and clear entity signals on top of that. And agentic traffic requires clean, structured, well-labeled web experiences on top of everything.

The Princeton KDD 2024 study found that strategies like citing sources and adding statistics can boost content visibility by up to 40% in generative engine responses. The same principle scales up to ASO: structured, machine-readable content is the price of admission.

Why Agent Search Optimization Matters Now

The groundwork was laid in December 2025, when the Linux Foundation launched the Agentic AI Foundation with AWS, Anthropic, Google, Microsoft, and OpenAI as platinum members. They contributed shared standards instead of competing ones. Three developments since then have moved ASO from concept to reality.

Google-Agent is live

On March 20, 2026, Google added Google-Agent to its user-triggered fetchers documentation. Project Mariner is the first named example: a research prototype that acts as an AI agent within Chrome, completing tasks for users.

The distinction from Googlebot matters. Googlebot crawls your pages in the background for indexing. Nobody asked it to. Google-Agent visits your site because a real person asked an AI to do something: compare prices, fill out a form, research vendors. User-triggered, action-oriented, and already rolling out.

AI agents are shipping as products

Google-Agent isn't an isolated experiment. Multiple companies have shipped agent-based browsers with real users:

| Product | Company | Launched | Key Capability |
|---|---|---|---|
| Chrome Auto Browse | Google | Jan 2026 | Gemini-powered autonomous browsing |
| Atlas (Agent Mode) | OpenAI | Oct 2025 | Multi-step task execution |
| Comet | Perplexity | Jul 2025 | Search-first agentic browsing |
| Project Mariner | Google DeepMind | Mar 2026 | Task completion within Chrome |

These are real products with real users. As Google's head of search Liz Reid noted: "I do think that probably means there's a world in which a lot of agents are talking with each other." The websites that make it easy for these agents to complete tasks will capture this traffic. The websites that don't will watch it flow to competitors.

WebMCP is becoming a standard

WebMCP (Web Model Context Protocol) is a proposed browser-level web standard that lets any webpage declare its capabilities as structured, callable tools for AI agents.

Right now, agents interact with websites by parsing raw HTML, taking screenshots, and guessing which button does what. If a redesign moves a single element, the agent breaks.

WebMCP fixes this. Agents call structured functions that your website explicitly declares, no guesswork required. Google's Chrome team and Microsoft's Edge team are building it together through the W3C Web Machine Learning Community Group. Both browsers should support it by late 2026.

How Agent Search Optimization Works

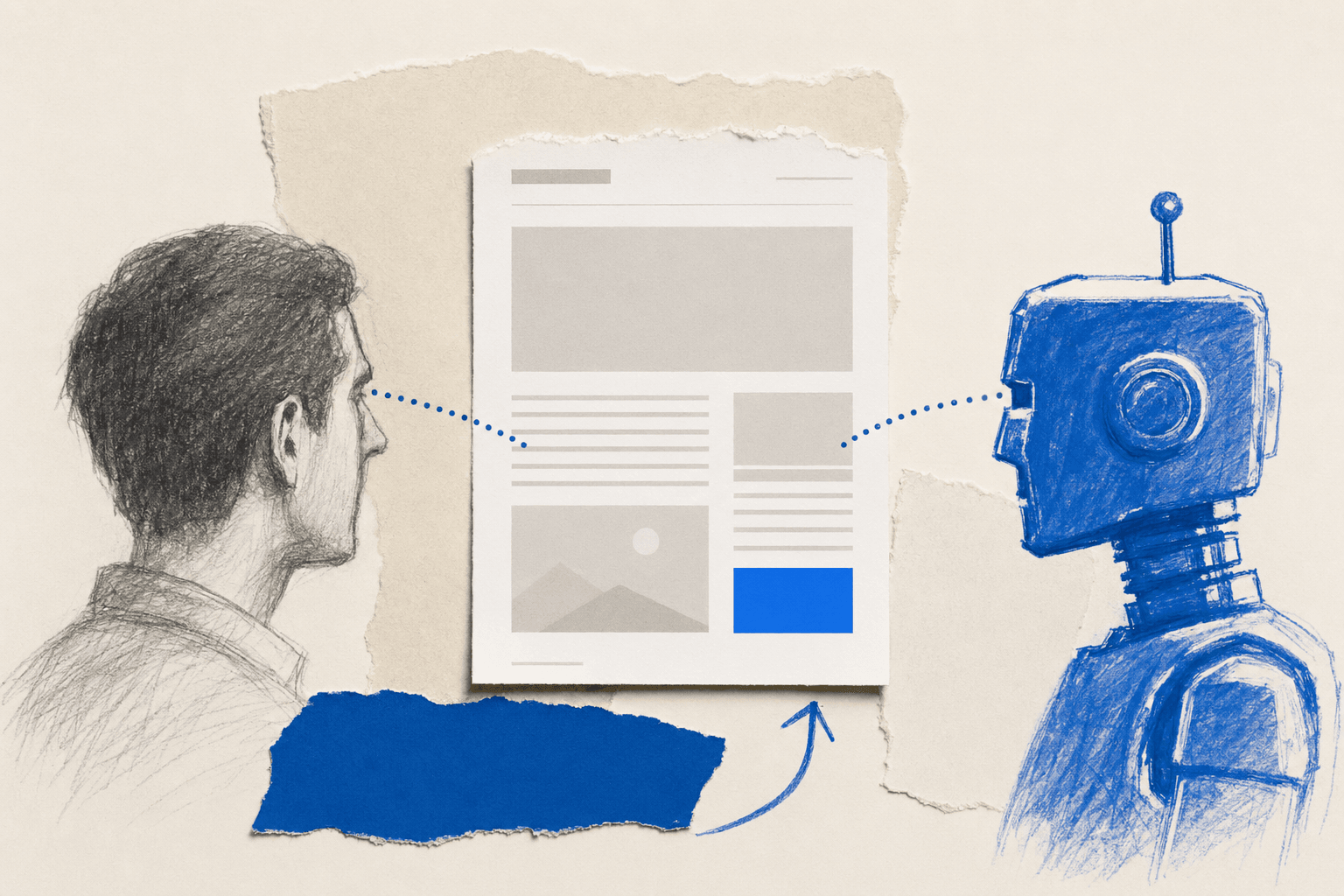

ASO operates at three levels: discoverability, evaluability, and actionability.

Level 1: Discoverability (can agents find you?)

This is the SEO foundation. Agents rely on search indexes to find candidate websites. If you're not indexed, you're invisible to agents too.

The basics:

- Allow AI crawlers in your robots.txt (GPTBot, PerplexityBot, ClaudeBot, Google-Agent)

- Use structured data (Article, FAQPage, Product schemas) so agents understand your content type

- Maintain a clean sitemap that includes all pages you want agents to discover

- Check that your CDN and WAF rules don't reject legitimate AI user agents

Level 2: Evaluability (can agents understand you?)

Once an agent finds your site, it needs to decide whether your product matches what the user asked for. Over 15,000 Visualping users already report competitive intelligence as their primary use case. Agents do the same thing at machine speed, comparing multiple options simultaneously. The sites that make evaluation easiest win. (The sites that hide pricing behind a "Contact Sales" form lose before the agent even tries.)

Make your value proposition machine-extractable:

- Include pricing on the page (agents compare on behalf of users)

- Use comparison tables with specific numbers, not vague descriptors

- Write self-contained paragraphs. Each one should make sense if pulled out of the article entirely

- Include a clear "what you get" summary near the top of key pages

Level 3: Actionability (can agents complete tasks?)

This is where ASO goes beyond SEO and AEO. Can an agent actually use your site to answer a question, such as comparing pricing plans or checking product availability?

WebMCP introduces a mechanism for this, and it's part of a broader stack. Google's developer guide to AI agent protocols outlines several standards working together: MCP for backend data access, A2A (Agent2Agent) for bot-to-bot communication, and UCP (Universal Commerce Protocol), which lets a machine buy your product directly from search results. The payment layer is already taking shape too: MPP (Machine Payments Protocol), built by Tempo and Stripe, lets agents pay per request in the same HTTP call, no API keys or accounts required. WebMCP is the browser-facing piece. Websites add toolname and tooldescription attributes to existing HTML forms, turning them into structured APIs that agents can discover and call.

An important distinction: not every page should be agent-accessible. Signup forms, checkout flows, and account creation should keep their guardrails (captchas, authentication). Agents that can create accounts freely are a vector for bot abuse and competitor sabotage. The pages to optimize for agents are the ones they need to read, not act on: pricing comparisons, product features, plan details.

For example, a plan comparison tool might look like this with WebMCP:

    <form toolname="comparePlans"
          tooldescription="Compare monitoring plans by checks, pages, and price">
      <label for="pages">Number of pages to monitor</label>
      <input id="pages" name="pages" type="number" required />
      <label for="frequency">Check frequency needed</label>
      <select id="frequency" name="frequency">
        <option value="daily">Daily</option>
        <option value="hourly">Hourly</option>
        <option value="5min">Every 5 minutes</option>
      </select>
      <button type="submit">Show matching plans</button>
    </form>

The agent doesn't need to guess which fields to fill or which button to press. The form declares its purpose, its inputs, and its expected behavior. And because it's a read operation (comparing plans, not creating an account), there's no abuse risk.
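To make the idea concrete, here is a rough Python sketch of what a reader of such a page could recover from the markup alone. It is not the WebMCP API and not how any real agent is implemented; it only scans HTML for forms carrying the toolname and tooldescription attributes described above. The names ToolFormParser and list_tools are hypothetical.

```python
from html.parser import HTMLParser


class ToolFormParser(HTMLParser):
    """Collects forms that carry a toolname attribute, plus their fields."""

    def __init__(self):
        super().__init__()
        self.tools = []       # one dict per annotated form
        self._current = None  # the annotated form currently open, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form" and "toolname" in attrs:
            self._current = {
                "name": attrs["toolname"],
                "description": attrs.get("tooldescription", ""),
                "inputs": [],
            }
        elif self._current is not None and tag in ("input", "select", "textarea"):
            self._current["inputs"].append({
                "name": attrs.get("name"),
                # select/textarea have no type attribute, so fall back to the tag
                "type": attrs.get("type", tag),
                "required": "required" in attrs,
            })

    def handle_endtag(self, tag):
        if tag == "form" and self._current is not None:
            self.tools.append(self._current)
            self._current = None


def list_tools(html):
    """Return the annotated forms found in an HTML string."""
    parser = ToolFormParser()
    parser.feed(html)
    return parser.tools
```

Run against the form above, list_tools would return one entry named comparePlans with two inputs (pages and frequency). Forms without a toolname attribute are ignored, which mirrors the point that only the pages you choose to expose become callable.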

If your website already has clean, well-structured HTML on its evaluation pages (pricing, features, product comparisons), you're 80% of the way to WebMCP readiness. The heavy lifting is good form hygiene, which is technical SEO fundamentals.

Go deeper: How to Monitor Your Brand in ChatGPT | AI-Powered Competitor Monitoring | What Is Content Monitoring?

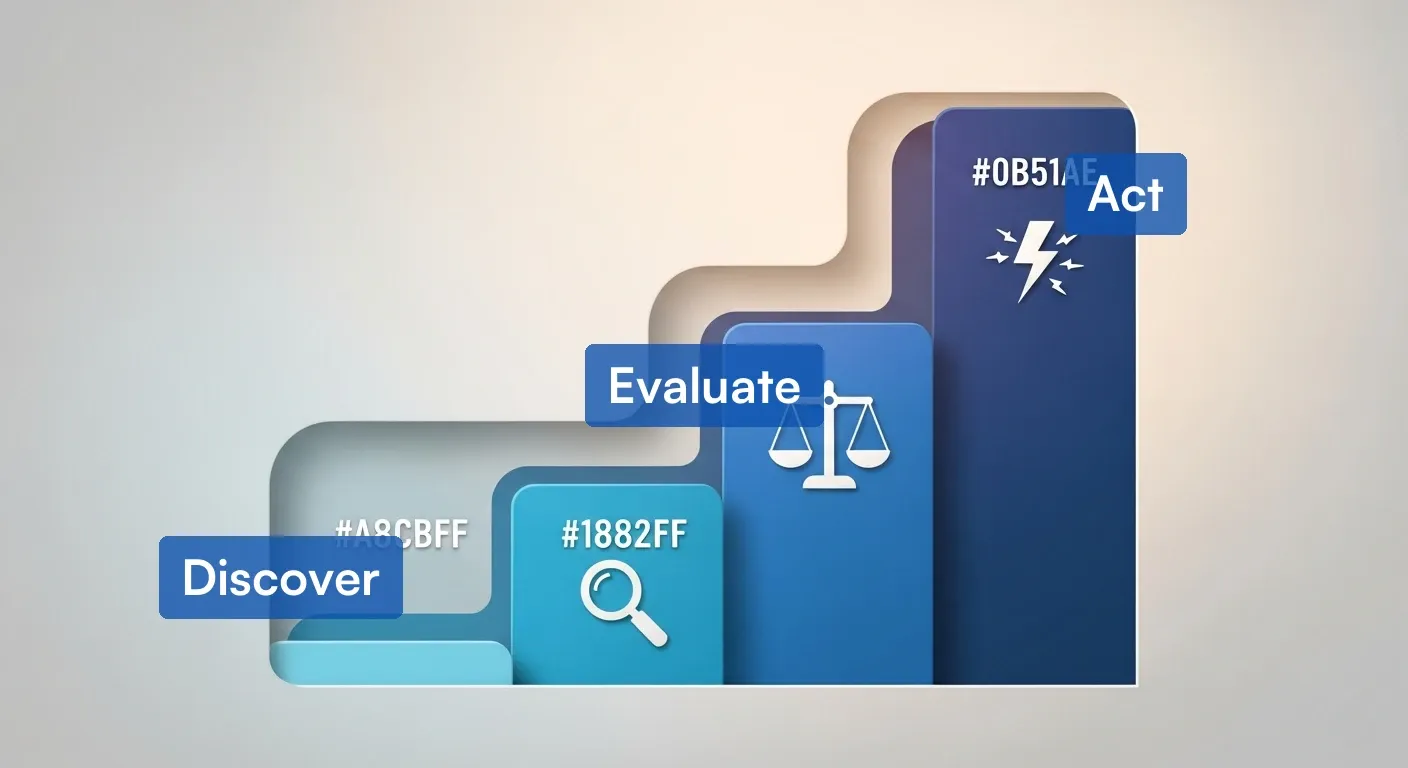

How ASO Differs from SEO and AEO

Most optimization frameworks stop at "get found" and "get cited." That's like building a storefront with great signage and no door. The table below shows where ASO fills the gap.

| Dimension | SEO | AEO/GEO | ASO |
|---|---|---|---|
| Audience | Search engine crawlers | LLMs generating answers | AI agents completing tasks |
| Goal | Rank in search results | Get cited in AI answers | Enable task completion |
| Content focus | Keywords, links, authority | Self-contained paragraphs, citations, expert quotes | Structured forms, clear labels, stable URLs |
| Key metric | Organic traffic | AI mention share | Agent task completion rate |
| User agent | Googlebot, Bingbot | GPTBot, PerplexityBot, ClaudeBot | Google-Agent, browser agents |
| Time horizon | Established (20+ years) | Growing (2-3 years) | Emerging (months old) |

ASO doesn't replace SEO or AEO. It extends them. You need all three layers working together. (If you're only doing the first two, you're building half a funnel.)

What to Do Right Now: A 7-Step ASO Checklist

The WebMCP spec is early and will change. That's fine. The foundations you build today carry forward regardless of how the standard evolves. Every item on this list makes your site better for humans too.

1. Track Google-Agent in your server logs

Filter your server logs for the Google-Agent user agent string. Volume will be low (the rollout began March 20, 2026), but a baseline now gives you context as traffic scales.

How you check depends on your hosting setup:

- Self-hosted (Nginx/Apache): SSH into your server and search your access logs. On most Linux servers, that's grep -i "Google-Agent" /var/log/nginx/access.log or the equivalent Apache path.

- WordPress on shared hosting: Log into your hosting control panel (cPanel, Plesk) and look for "Raw Access Logs" or "Access Logs" under the metrics section. Download the log file and search it for "Google-Agent."

- Cloudflare (Pro plan or higher): Use the Security Analytics dashboard and filter by user agent string.

- AWS CloudFront: If you have log delivery enabled to S3, query them with Athena. Filter the user agent column for Google-Agent.

- Shopify, Wix, or Squarespace: These platforms don't expose raw server logs. Your best option is a third-party analytics tool that captures bot traffic, or ask your platform's support team if they offer user agent reporting.

To verify a request is genuinely from Google (not a spoofer), cross-reference the requesting IP against Google's published IP ranges for agents.
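The cross-reference itself is a simple containment check. The sketch below uses Python's standard ipaddress module. The CIDR blocks in PLACEHOLDER_RANGES are reserved documentation ranges standing in for the real list, which you would load from the file Google publishes; the function name is_in_ranges is hypothetical.

```python
import ipaddress

# Placeholder CIDR blocks (reserved documentation ranges), NOT Google's real
# ranges. Replace with the ranges Google publishes for its fetchers.
PLACEHOLDER_RANGES = ["192.0.2.0/24", "2001:db8::/32"]


def is_in_ranges(ip, cidr_ranges):
    """Return True if the IP address falls inside any of the CIDR ranges."""
    address = ipaddress.ip_address(ip)
    networks = [ipaddress.ip_network(cidr) for cidr in cidr_ranges]
    # An IPv4 address is never "in" an IPv6 network and vice versa,
    # so mixed lists are safe to check in one pass.
    return any(address in network for network in networks)
```

A request that claims to be Google-Agent but comes from an address outside the published ranges is a candidate spoofer and can be excluded from your baseline.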

Once you see hits, look at which pages agents are visiting. If they keep landing on your pricing page, that's a signal to make pricing machine-readable (structured data, clear plan names, specific numbers). If they're hitting your signup form, that's the page to audit for WebMCP readiness first.
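If you have raw access logs, a few lines of Python can produce that per-page view. This is a minimal sketch that assumes the common "combined" log format used by default in Nginx and Apache; other formats would need a different pattern. The function name agent_hits_by_path is hypothetical, and the matching is a plain substring test on the user agent field.

```python
import re
from collections import Counter

# Matches the request, status, size, referrer, and user agent fields of a
# combined-format access log line.
LINE_RE = re.compile(
    r'"[A-Z]+ (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def agent_hits_by_path(lines, token="Google-Agent"):
    """Count requests per path whose user agent contains the given token."""
    hits = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match and token.lower() in match.group("ua").lower():
            hits[match.group("path")] += 1
    return hits
```

Typical usage would be to open the log file, pass the file object as lines, and print hits.most_common(10) to see the pages agents request most.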

2. Update your robots.txt

Allow all AI agents alongside traditional crawlers:

    # AI Search Bots
    User-agent: GPTBot
    Allow: /

    User-agent: ChatGPT-User
    Allow: /

    User-agent: PerplexityBot
    Allow: /

    User-agent: Google-Extended
    Allow: /

    User-agent: ClaudeBot
    Allow: /

    # AI Agent Fetchers
    User-agent: Google-Agent
    Allow: /
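Before deploying a robots.txt change, it can help to check how a parser reads it. The sketch below uses Python's standard urllib.robotparser on the file contents as a string. Its matching rules are not guaranteed to be identical to those of any particular crawler, so treat the result as a sanity check rather than proof. The function name allowed_agents is hypothetical.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = [
    "GPTBot", "ChatGPT-User", "PerplexityBot",
    "Google-Extended", "ClaudeBot", "Google-Agent",
]


def allowed_agents(robots_txt, agents=AI_AGENTS, path="/"):
    """Map each user agent token to whether robots_txt lets it fetch path."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, path) for agent in agents}
```

Any agent that maps to False for a page you want agents to read (pricing, features, plan details) points to a rule worth revisiting.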

3. Audit your key user flows

Identify the 5 to 10 most important actions on your site: lead forms, booking flows, product searches, checkout, support tickets.

For each, ask:

- Are the HTML labels clear and descriptive?

- Are the input types predictable (email fields use type="email", URLs use type="url")?

- Are redirects stable after form submission?

- Is the form clean HTML, or is it buried in JavaScript that an agent can't parse?
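The first two questions can be partly automated. Here is a minimal sketch that scans static HTML for fields with no associated label and inputs with no explicit type. It only sees markup present in the HTML source, so forms rendered entirely by JavaScript will come back empty, which is itself a useful signal. It also does not detect labels that wrap their input instead of using the for attribute. The names FormFieldCollector and audit_form are hypothetical.

```python
from html.parser import HTMLParser

# Field types that don't need a visible label.
SKIPPED_TYPES = {"hidden", "submit", "button", "reset"}


class FormFieldCollector(HTMLParser):
    """Records label targets and form fields found in an HTML document."""

    def __init__(self):
        super().__init__()
        self.label_targets = set()  # ids referenced by <label for="...">
        self.fields = []            # (tag, attributes) for each field

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "label" and attrs.get("for"):
            self.label_targets.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            if attrs.get("type") not in SKIPPED_TYPES:
                self.fields.append((tag, attrs))


def audit_form(html):
    """Return a list of human-readable problems found in the form markup."""
    collector = FormFieldCollector()
    collector.feed(html)
    problems = []
    for tag, attrs in collector.fields:
        name = attrs.get("name") or attrs.get("id") or "(unnamed)"
        labelled = attrs.get("id") in collector.label_targets or attrs.get("aria-label")
        if not labelled:
            problems.append(f"{name}: no <label for> or aria-label")
        if tag == "input" and "type" not in attrs:
            problems.append(f"{name}: no explicit type attribute")
    return problems
```

An empty list means every field the scan could see has a label and a declared type. Redirect stability still needs a separate check.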

4. Check your CDN and WAF rules

Bot protection rules built to stop scrapers can accidentally block legitimate AI agents. (This happens more than you'd think.) Review your CDN and WAF configurations to make sure Google-Agent and other known AI user agents can get through.
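One rough way to spot an overly strict rule is to request the same page with different User-Agent headers and compare status codes. The sketch below does that with Python's standard urllib. It rests on loud assumptions: the user agent values you pass are simplified tokens rather than the full strings real agents send, and many WAFs also check the source IP, so a request from your own machine may be treated differently from genuine agent traffic. The function names and the list of "blocked" status codes are hypothetical choices.

```python
import urllib.error
import urllib.request

# Status codes treated as "probably blocked" in this sketch (an assumption).
BLOCK_STATUSES = {401, 403, 406, 429, 503}


def fetch_status(url, user_agent, timeout=10):
    """Return the HTTP status code the server gives this user agent."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 4xx/5xx responses arrive as exceptions


def find_blocked_agents(url, user_agents, baseline_agent, fetch=fetch_status):
    """Return agents whose status differs from the baseline and looks like a block."""
    baseline = fetch(url, baseline_agent)
    blocked = {}
    for agent in user_agents:
        status = fetch(url, agent)
        if status != baseline and status in BLOCK_STATUSES:
            blocked[agent] = status
    return blocked
```

Use a normal browser user agent string as the baseline. Any agent that shows up in the result is a prompt to look at the matching WAF or CDN rule, not a definitive diagnosis.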

5. Add structured data to key pages

Agents rely on structured data to understand what a page offers. (If you're new to this, our guide on SEO monitoring covers the basics.) At minimum:

- Article schema on blog posts (with datePublished and dateModified)

- FAQPage schema on pages with Q&A content

- Product schema on product and pricing pages

- Organization schema on your about page
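As an illustration of the Product case, the sketch below builds schema.org Product markup with one Offer per plan and wraps it in a JSON-LD script tag. The product name, plan names, and prices in the usage note are made up, and the helper names product_jsonld and to_script_tag are hypothetical. Validate real markup with a structured data testing tool before shipping.

```python
import json


def product_jsonld(name, description, plans, currency="USD"):
    """Build a schema.org Product dict with one Offer per (plan_name, price)."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "offers": [
            {
                "@type": "Offer",
                "name": plan_name,
                "price": f"{price:.2f}",
                "priceCurrency": currency,
            }
            for plan_name, price in plans
        ],
    }


def to_script_tag(data):
    """Serialize the dict as a JSON-LD script tag for the page head."""
    # Escape "</" so text inside the JSON can't close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

For example, product_jsonld("Example Monitor", "Website change alerts", [("Starter", 10), ("Business", 50)]) yields two offers with prices "10.00" and "50.00", which gives an agent plan names and specific numbers without parsing the visual layout.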

6. Make your content machine-extractable

Write self-contained paragraphs that make sense if pulled out of context. Use specific numbers instead of vague claims. Build comparison tables with concrete values. Put pricing on the page rather than hiding it behind a "Contact Sales" form.

An agent evaluating your product will compare you against competitors in seconds. Competitor tracking already matters for humans. For agents, it's even more decisive: the site with clear, specific, structured information wins.

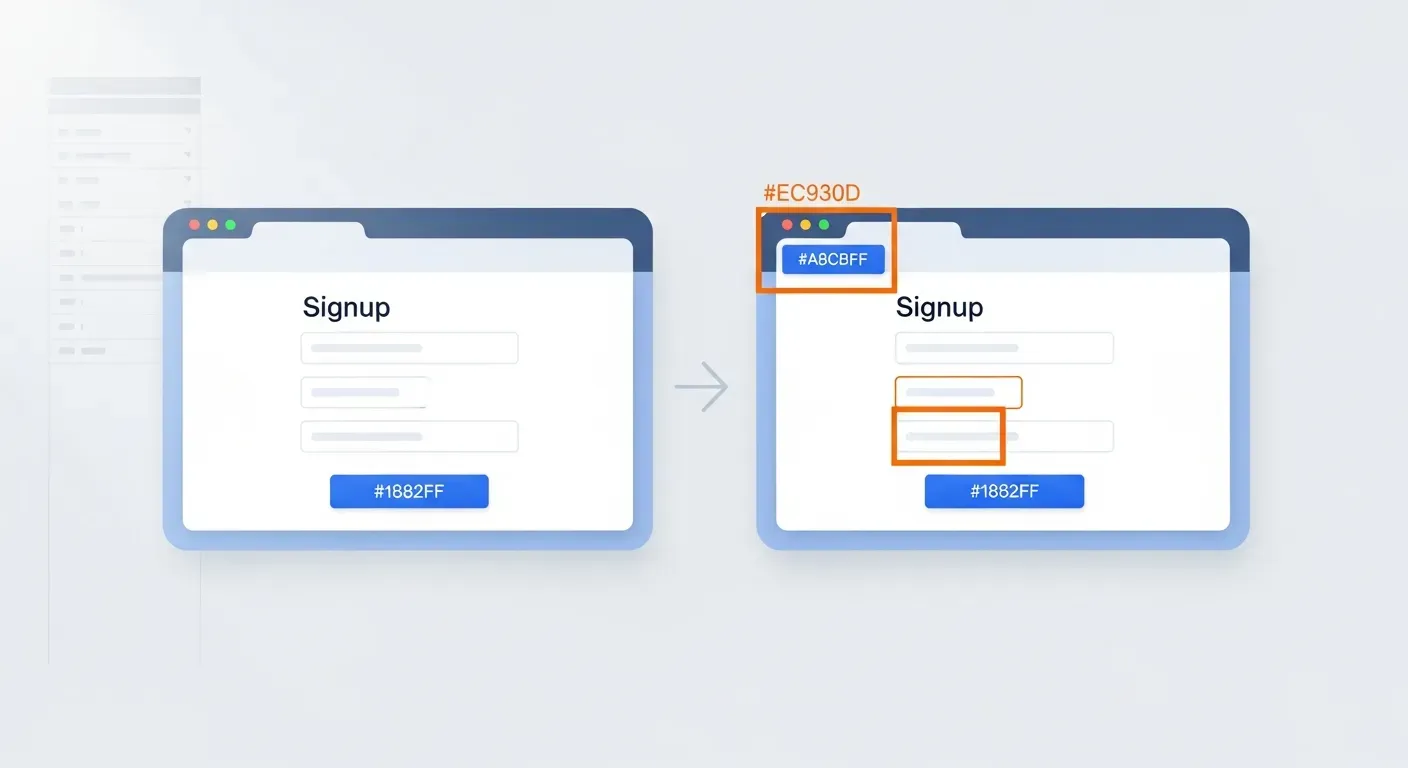

7. Monitor your site for changes that break agent flows

This is the step every other ASO guide misses. Agents need stable, predictable site structures. A redesign that moves a form element, renames a button, or changes a redirect URL will break an agent's workflow silently.

The trend is already visible in the data. Across the Visualping platform, new monitors on pricing, signup, and checkout pages surged 66% quarter-over-quarter. Signup page monitoring alone jumped 116%. Businesses are waking up to the fact that these pages change constantly, and the cost of missing a change is climbing.

That cost gets worse with agents. 40% of pricing and signup page changes happen between midnight and 8 AM UTC. An agent browsing at 3 AM on a user's behalf hits a just-changed page that no human has reviewed yet. The form moved. The button was renamed. The agent fails silently, recommends a competitor, and the user never knows. You'll never see the lost conversion in your analytics. It just vanishes.

Website change detection catches these breaks before they cost you. Set up monitoring on your key conversion pages (signup, pricing, checkout, contact forms) and get alerted the moment structural changes happen.
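To show what a "structural change" means in practice, here is a minimal sketch that fingerprints only the parts of a page an agent depends on: forms, fields, and buttons, with their key attributes. Copy edits leave the fingerprint unchanged, while a renamed field or a new form action changes it. This illustrates the idea only; it is not how Visualping or any other monitoring product works. The tracked attributes are an assumption, and visible button text is deliberately ignored, so extend it if your flows depend on it.

```python
import hashlib
from html.parser import HTMLParser

# Which attributes count as "structure" for each tag (an assumption).
TRACKED = {
    "form": ("action", "method", "toolname"),
    "input": ("name", "type"),
    "select": ("name",),
    "textarea": ("name",),
    "button": ("name", "type"),
}


class StructureParser(HTMLParser):
    """Records tracked tags and attributes in document order."""

    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in TRACKED:
            attrs = dict(attrs)
            kept = ",".join(f"{key}={attrs.get(key) or ''}" for key in TRACKED[tag])
            self.parts.append(f"{tag}[{kept}]")


def structure_fingerprint(html):
    """Return a SHA-256 hex digest of the page's form structure."""
    parser = StructureParser()
    parser.feed(html)
    return hashlib.sha256("\n".join(parser.parts).encode("utf-8")).hexdigest()
```

Store the fingerprint for each key page and compare it on every deploy or on a schedule. A mismatch means a form, field, or button changed and the agent-facing flow deserves a manual check.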

This Is the Responsive Design Moment for AI

Here's a pattern worth studying. When mobile arrived, every company knew it mattered. Most waited. The sites that adopted responsive design early won the distribution game. Late movers scrambled to catch up while traffic shifted permanently.

ASO follows the same arc, but compressed. Mobile adoption played out over years. AI agent adoption is playing out in months. Chrome Auto Browse launched in January 2026. Google-Agent appeared in documentation in March. WebMCP browser support should land by late 2026. Three events, six months.

The companies already using AI-powered competitor monitoring to track how rivals adapt will see this shift first. And this time, Google, Microsoft, and the W3C are building the infrastructure together. There's no question of whether it's happening. The only question is whether your site is ready when the agents arrive.

For a deeper look at the mindset shift, Marie Haynes' analysis of the agent-to-agent vision is worth reading.

Frequently Asked Questions

What is agent search optimization?

Agent search optimization (ASO) is the practice of making your website discoverable, evaluable, and actionable by AI agents acting for users. It extends traditional SEO and answer engine optimization: beyond getting found and cited, ASO ensures agents can complete tasks on your site.

What is the Google-Agent user agent?

Google-Agent is a user-triggered fetcher that Google added to its official documentation on March 20, 2026. Unlike Googlebot (which crawls pages in the background for indexing), Google-Agent visits your site because a real person asked an AI agent to do something on their behalf. Project Mariner is the first named example.

How is ASO different from SEO?

SEO helps search engines find and rank your content. ASO ensures AI agents can find your content, evaluate it against competitors, and complete actions on your site. SEO targets crawlers (learn how to track SEO changes on your website). ASO targets agents that browse, compare, and act.

What is WebMCP?

WebMCP (Web Model Context Protocol) is a proposed W3C browser-level standard developed by Google's Chrome team and Microsoft's Edge team. It lets websites declare their capabilities as structured, callable tools for AI agents. Instead of agents guessing what your forms do, WebMCP lets you tell them explicitly.

Do I need to rebuild my website for AI agents?

No. If your website already has clean HTML forms with clear labels, predictable inputs, and stable redirect URLs, you're most of the way there. WebMCP adds lightweight attributes (toolname, tooldescription) to existing form elements. The bigger risk is unmonitored changes. 304 companies on the Visualping platform already monitor 3 or more conversion-critical pages systematically, averaging 85 pages each. Businesses check pricing pages every 2.8 hours (median). They treat these pages like live production systems because that's what they are. Content monitoring catches these shifts automatically.

How do I check if Google-Agent is visiting my site?

Filter your server logs for the user agent string "Google-Agent." Verify authenticity by checking the IP against Google's published IP ranges. Volume is low during the initial rollout, but a baseline now gives you context as agent traffic scales.


Eric Do Couto

Eric Do Couto leads Marketing at Visualping, where he builds the systems that connect website change data to marketing. He writes about competitive monitoring, AI-driven optimization, and the tools that help teams move faster.